CS 111 - Lecture 3 Notes

Scribe Notes for Lecture 3 - April 6th, 2010

Author(s): Eric Wang

Problems with standalone application example from Lecture 2

1. Redundancy

In the Lecture 2 example, the same read_sector() function was needed at every layer of our system. However, by rewriting the exact same function multiple times, we waste valuable memory, since the code is effectively duplicated in the BIOS, MBR, VBR, and application.

What if we eliminated the duplicate code and kept a single copy of the function in the lowest layer, the BIOS?

Trade-offs:

+ 1 copy, 1 maintainer - Centralized location to manage, debug, and modify code

+ Standardized - We force all subsequent code to abide by our function's assumptions

- Inflexible, unportable - Function may not behave exactly the same on another platform with another BIOS

2. Efficiency

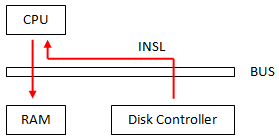

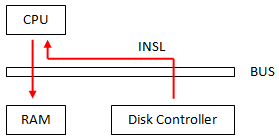

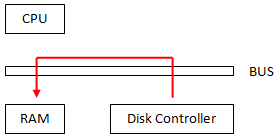

Another critical issue lies in the system's bus performance. In our original model, fetching data from the disk controller into RAM is very inefficient: we use the insl instruction to copy data first from the disk controller into the CPU, and then from the CPU into RAM, so every word crosses the bus twice. This is shown in the diagram below:

Use insl to copy from disk to CPU to RAM

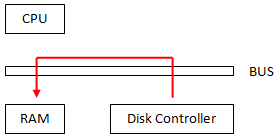

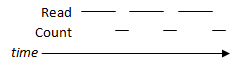

What if we could transfer data directly from disk to memory? We can, via Direct Memory Access (DMA), as seen below:


Direct Memory Access (DMA) trade-offs:

+ More parallelism - Lets CPU do other "more important" work

+ Less bus contention - Data crosses the bus once instead of twice, so there is less traffic on the bus

+ Faster - Lower latency

- More complex - Intercommunication between devices is complicated

3. Speed

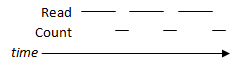

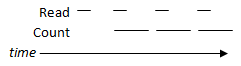

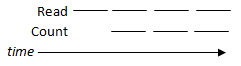

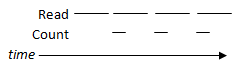

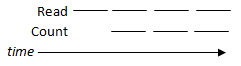

Following a simple model, the timeline of reading a segment of data and counting it would look like the following:

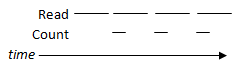

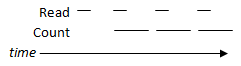

In this model, each operation must wait until the previous one is done before the next can proceed. How do we make this more efficient? Overlap them: pipeline reading and processing so that the next block is read while the current one is being counted.

As the timelines show, overlapping is faster regardless of whether counting is faster than, slower than, or as fast as reading.

4. Development

Regarded as the biggest of these four issues, a single standalone application is too hard to develop and maintain.

In particular, it is very difficult:

- to change

- to reuse parts in other programs

- to run several programs simultaneously

- to recover from faults

How do we cope with complexity?

As we've seen from the aforementioned problems, applications can quickly become difficult to write if we don't organize them well. To deal with this complexity, we focus on two key design aspects:

- Modularity - Break problem into self-functioning pieces

- Abstraction - Have "nice" boundaries between functional pieces, where roles are clearly defined

Adopting this kind of design can drastically reduce an application's development time. Take the following for example:

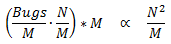

Suppose our application has N lines of code and M modules, where the number of bugs ∝ N and the time to find/fix each bug ∝ the amount of code we must search.

Following our "single application" model, each bug may be anywhere in all N lines, so: Debug time ∝ N × N = N²

If we follow a modular design, each bug can be found within a single module of about N/M lines, so our debug time becomes: Debug time ∝ N × N/M = N²/M

(this assumes bugs can be easily isolated to certain modules).

Clearly, we can see the benefits of modular design!
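As a worked example of this argument, assuming the number of bugs grows like N, that finding each bug means searching all the code it could be in, and hypothetical sizes N = 10,000 lines and M = 10 modules:

$$
T_{\text{single}} \propto N \cdot N = N^2 = 10^8,
\qquad
T_{\text{modular}} \propto N \cdot \frac{N}{M} = \frac{N^2}{M} = 10^7
$$

so, under these assumptions, modularity cuts debugging effort by a factor of M = 10.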

Key considerations with modularity

Modularity can have either good or bad effects on an application's design. We break down the trade-offs with the following metrics:

A. Performance

Does a modular app perform better than a unitary app? Usually not: modularization typically costs some performance (we want this cost to be small).

B. Robustness

A well-designed module should work well in harsh environments (tolerance of faults, failures, etc.).

C. Simplicity

Easy to learn/use, small manual.

D. Portability / Neutrality / Flexibility / Lack of Assumptions

Let components be used in many ways and in different applications.

A closer look at the "read" function

Let's take a look at the signature of the read_sector function:

void read_sector(int s, char *a);

With the earlier considerations in mind, we make the following changes to make it more modular and abstract:

- void read_sector to int read_sector - A read function should indicate whether it was successful or not

- int s to secno_t s - Hard-coding "int" may be ill-advised (e.g., a 32-bit int cannot number every sector on a large disk). Let the system define the type

- Add an offset parameter of type secoff_t (rather than a plain unsigned sec_offset) - Instead of reading only whole sectors, we specify where to start reading

- Insert a new parameter size_t nbytes - This indicates how many bytes to read from the offset location

Applying these changes, and folding the sector number and offset into a single byte offset b, we get a much more robust function that can be used in more scenarios:

int read(off_t b, char *a, size_t nbytes);

To make this even more flexible for different kinds of reading applications (e.g., network sockets, hard disks, tape drives, RAM), we add a file descriptor parameter that identifies what to read from. read itself uses the descriptor's current offset, which lseek can change, while pread takes the offset explicitly. The result is the standard POSIX functions:

ssize_t read(int fd, void *a, size_t nbytes);

off_t lseek(int fd, off_t b, int whence);

ssize_t pread(int fd, void *a, size_t nbytes, off_t b);

"Read" has two properties:

- Virtualization - The application works with a virtual machine (files reached through file descriptors), not the real machine

- Abstraction - It's simpler than the underlying machine

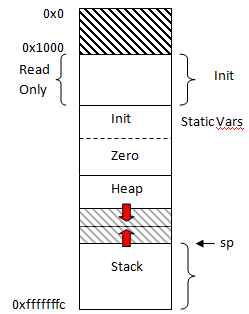

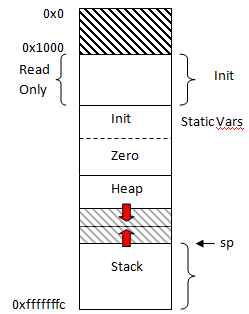

By following these principles, each application is given its own virtual address space (virtual memory is discussed in later lectures). Effectively, each application sees the memory layout pictured above, where the heap and stack grow as needed.

How to enforce modularity (+ virtualization)

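- None

- Function calls + C modules, which have problems:

  - Memory exhaustion (e.g., a callee that recurses without bound can overflow the stack and crash the whole program)

  - Callee can step all over memory (e.g., overwrite the caller's data)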

This is considered SOFT modularity. For a truly robust system, we want HARD modularity, so that no matter how badly one module (caller or callee) screws up, the other keeps working.