Abstract

This paper reports on educational assessment: measures to validate that a subject has been learned. The outcomes described are from actual UCLA Computer Science courses, but the approach is independent of subject matter. A bibliography describes application of the methods presented here to other subjects and school levels; it summarizes an extensive literature that includes assessment in distance learning and elementary school settings. The text outlines ideas and derivations, and the references enable deeper understanding, but a reader can use these procedures without either.

The methods are not the author's original invention. The following describes a way to apply them and to display students' learning. The paper contributes new ways to indicate student achievement and to distinguish individuals with subject mastery from others tested. It does so through figures that enable teachers and students to understand and apply this form of testing.

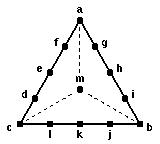

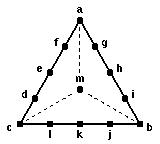

The measures use probability and are based on concepts of information. Although both are discussed in the outline of derivations, it is unnecessary to understand either concept to test this way. A summary figure, the discussion of a practical implementation, and the references give the reader the ability to vary the weights shown here. That summary figure displays scoring based on logarithmically weighting the subjective or belief probability when individuals make one of thirteen possible responses. The responses apply to large numbers of questions, each of which can be completed with any of three candidate statements, one of them obviously false to anyone who knows the material. Three possible answers are intermediate between the two remaining, possibly true statement completions. They enable expression of partial knowledge: a relative belief that one of the other two is more likely true, or an inability to distinguish between them.

Each of the thirteen answers can readily be understood as a subjective probability statement. The summary figure relates their weights to statistical concepts such as risk and loss. This matters for guiding students to answer with their true beliefs. Otherwise such mathematical ideas are not needed by testing participants. The practical effect is that thirteen responses convert three-alternative statement-completion questions into a means to evaluate whether complex material has been absorbed.

The method is generally applicable. It is related to earlier work on a computerized learning system called Plato that handled a wide variety of subjects at many educational levels. This paper describes ways to use an unconventional assessment approach to rapidly determine which concepts have not yet been absorbed. The new methods presented here are those I developed in classes I taught. The ideas and tools in this paper could empower others to expand and enrich their teaching and the learning processes it is meant to assist.

Acknowledgement. Stephen Seidman commented on earlier drafts. Many thanks to him and others who supported this work: James Bruno, Martin Milden, Thomas A. Brown, E. Richard Hilton, Charles W. Turner, and David Patterson.

Assessment methodology is usually thought of as divided between thorough methods that involve time-consuming evaluation of student essays and rapidly scored multiple-choice tests. There is a third way. It involves multiple choice where each response corresponds to a belief. That approach turns questions into a subtle tool to evaluate how much is known.

Knowledge and information are similar, so one could start with logarithmic weighting [1-3]. Information theory uses that weighting to measure received transmissions. There, a probability is associated with each possible message. The most is learned when one finds out which specific value was sent from a group of equally probable values. Decision theory [4, pp. 14-15] adds the ideas of loss and risk. Risk is average loss: it enables rational choice between actions when one expresses relative certainty about knowledge.
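
As a small illustration of the logarithmic weighting mentioned above, the following sketch computes Shannon entropy for a three-alternative question. The function name `entropy_bits` and the two example distributions are assumptions made here for illustration; they do not come from the paper.

```python
import math


def entropy_bits(probs):
    """Shannon entropy, in bits, of a discrete probability distribution."""
    # Terms with zero probability contribute nothing to the sum.
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical belief distributions over three candidate completions.
uniform = [1 / 3, 1 / 3, 1 / 3]   # no idea which completion is true
skewed = [0.8, 0.1, 0.1]          # fairly sure of the first completion

print(entropy_bits(uniform))  # equals log2(3), the most that can be learned
print(entropy_bits(skewed))   # smaller: less remains to be learned
```

The uniform case has the largest entropy, which is one way to read the statement that the most is learned when the alternatives were equally probable.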

While a background in probability, statistics, information theory and decision theory is useful, it is not necessary either to design or to take tests that use the procedures explained below. A summary of the key weights I have used in class appears here as Figure 1. The following describes how those values relate to statements about belief in answers. The explanations relate them to logarithmic weighting and to minimizing risk, which translates into the traditional effort to gain the maximum score on a test.

THIRTEEN RESPONSES

CHOICES

If totally sure, use a definite a, b, or c response.

If totally unsure, use an m response.

If you see one definitely wrong a, b, c value, choose among the remaining five on the opposite line.

Indicate preference between two values by d, f, g, i, j, l responses.

Indicate uncertainty between two values by e, h, k responses.

| Choice | Letter | Points | Fraction |
|---|---|---|---|
| Correct | a b c | 30 | 1.000 |
| Wrong | | -100 | 0.000 |
| Uninformed | m | 0 | 0.769 |
| Near Right | d f g i j l | 20 | 0.923 |
| Between Two | e h k | 10 | 0.846 |
| Near Wrong | d f g i j l | -10 | 0.692 |
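
The Fraction column is consistent with rescaling the point values linearly so that -100 maps to 0 and 30 maps to 1. The sketch below checks that reading. The rescaling rule is inferred from the tabulated numbers, not stated in the text, and the names `LOW`, `HIGH`, and `fraction` are introduced here for illustration.

```python
# Assumed endpoints of the point scale, read off the table above.
LOW, HIGH = -100, 30


def fraction(points):
    """Rescale a point value to the 0..1 range, rounded to three decimals."""
    return round((points - LOW) / (HIGH - LOW), 3)


for label, points in [("Correct", 30), ("Near Right", 20), ("Between Two", 10),
                      ("Uninformed", 0), ("Near Wrong", -10), ("Wrong", -100)]:
    print(f"{label:12s} {points:5d} {fraction(points):.3f}")
```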

| Response | a | b | c |
|---|---|---|---|
| a | 30 | -100 | -100 |
| b | -100 | 30 | -100 |
| c | -100 | -100 | 30 |
| d | -10 | -100 | 20 |
| e | 10 | -100 | 10 |
| f | 20 | -100 | -10 |
| g | 20 | -10 | -100 |
| h | 10 | 10 | -100 |
| i | -10 | 20 | -100 |
| j | -100 | 20 | -10 |
| k | -100 | 10 | 10 |
| l | -100 | -10 | 20 |
| m | 0 | 0 | 0 |

The definition of risk is expected loss.

Rows indicate points given to a through m answers when the respective column headings are true.
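
One way to apply the matrix is as a lookup table keyed by response letter. The following is a minimal sketch: the point values are copied from the matrix above, while the names `POINTS`, `score`, and `total` and the sample answer sheet are hypothetical.

```python
# Points for each response, in the order (a true, b true, c true).
POINTS = {
    "a": (30, -100, -100), "b": (-100, 30, -100), "c": (-100, -100, 30),
    "d": (-10, -100, 20),  "e": (10, -100, 10),   "f": (20, -100, -10),
    "g": (20, -10, -100),  "h": (10, 10, -100),   "i": (-10, 20, -100),
    "j": (-100, 20, -10),  "k": (-100, 10, 10),   "l": (-100, -10, 20),
    "m": (0, 0, 0),
}
COLUMN = {"a": 0, "b": 1, "c": 2}


def score(response, truth):
    """Points for one response letter when `truth` is the true completion."""
    return POINTS[response][COLUMN[truth]]


def total(responses, key):
    """Sum of points over a test, pairing each response with its true answer."""
    return sum(score(r, t) for r, t in zip(responses, key))


# Hypothetical four-question answer sheet and answer key.
responses = ["a", "g", "m", "k"]
key = ["a", "a", "b", "c"]
print(total(responses, key))  # 30 + 20 + 0 + 10 = 60
```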

The loss-matrix values make a definite a, b, or c answer the minimum-risk choice only when the student is more than 90% certain. Otherwise there is a strong preference for an m response. Thus students who do not follow the rule "answer a, b, c only when certain" fall below the upper levels unless they are truly knowledgeable about the test question.
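
The 90% figure can be checked with a short worked calculation. This is a sketch under an assumption not stated in the text: the student assigns belief $p$ to alternative a and all remaining belief $1-p$ to alternative b. The symbols $p$ and $E[\cdot]$ (expected points) are introduced here for illustration, and maximizing expected points is treated as equivalent to minimizing risk.

$$
\begin{aligned}
E[\text{a}] &= 30p - 100(1-p) = 130p - 100,\\
E[\text{g}] &= 20p - 10(1-p) = 30p - 10,\\
E[\text{m}] &= 0.
\end{aligned}
$$

$$
E[\text{a}] > E[\text{g}] \iff 100p > 90 \iff p > 0.9,
\qquad
E[\text{a}] > E[\text{m}] \iff p > \tfrac{100}{130} \approx 0.769.
$$

Under that assumption a definite answer beats the adjacent near-right response g only above 90% certainty, and beats the uninformed response m only above about 77%, the same value as the Fraction entry listed for m. If the remaining doubt were spread over both other alternatives, the thresholds would differ.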