- Mfm: 3D motion from 2D motion causally integrated over time (submitted, 2000)

- A real-time system for 3D motion estimation (to appear IEEE CVPR 2000)

For academic purposes only.

Despite the wealth of algorithms to estimate 3-D structure

from image motion (SFM), none of them has proven effective on real-world

sequences without user intervention. While the geometry of SFM is by now

fairly well understood, the role of noise has been studied only superficially,

and the interplay between geometry and

noise almost completely ignored. Therefore, there is

an urgent need to study the fundamental limitations on the quality of the

reconstruction from the point of view of noise, with the aid of both analysis

and experimentation. Analysis will involve studying the local sensitivity

of the optimization underlying the problem of SFM as well as the

global characterization of extrema. The analysis will

then guide a thorough experimental assessment of the performance, robustness,

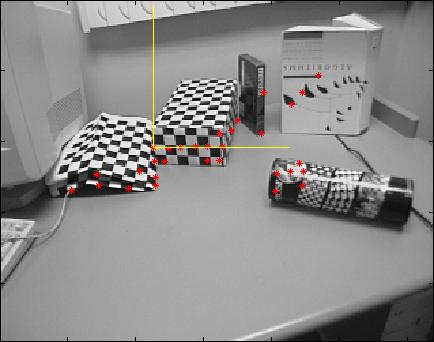

sensitivity and domain of convergence of SFM algorithms. The real-time

implementation will, for the first time, allow extensive experimental validation

of SFM algorithms.
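As an illustration of what "local sensitivity" can mean, and not as the analysis this project proposes, consider the standard first-order perturbation of a least-squares estimator. Assume, for this sketch only, that the SFM unknowns $\theta$ (camera motion and 3-D structure) are estimated by minimizing the squared difference between the measured image features $y$ and their predicted projections $h(\theta)$, with Jacobian $J$ evaluated at the optimum:

$$
\hat\theta = \arg\min_{\theta} \| y - h(\theta) \|^2,
\qquad
J = \frac{\partial h}{\partial \theta}\Big|_{\theta = \hat\theta}
$$

Linearizing the normal equations $J^\top \big(y - h(\theta)\big) = 0$ and neglecting second derivatives of $h$, a perturbation $\delta y$ of the measurements moves the estimate by

$$
\delta\hat\theta \approx (J^\top J)^{-1} J^\top \delta y,
\qquad
\operatorname{Cov}(\hat\theta) \approx \sigma^2 (J^\top J)^{-1}
\quad \text{if } \delta y \sim \mathcal{N}(0, \sigma^2 I).
$$

Directions in which $J^\top J$ has small eigenvalues are those in which noise is amplified most, which is one place where geometry and noise interact. In SFM, $J^\top J$ is singular unless the gauge (for instance the global scale and the reference frame) is fixed, because those quantities cannot be recovered from image measurements alone. This local picture says nothing about the global structure of the extrema, which the analysis treats separately.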

Real scenes are rarely static. Segmenting a visual scene into regions that move according to the same motion model is therefore essential if vision is to serve as a sensor for control systems operating in non-trivial environments. At this stage, several consistency criteria will be tested: (i) metric (the norm of the estimation residual), (ii) graph-theoretic (graph partitioning and normalized cuts), and (iii) statistical. The interplay between 2D segmentation in the image plane and 3D motion segmentation will also be investigated.
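The graph-theoretic criterion (ii) can be illustrated with a small sketch. It assumes, purely for illustration, that each tracked feature is summarized by its 2D image-plane motion vector, that pairwise affinities are Gaussian in the difference of those vectors, and that a single two-way split is wanted. The names `motion_affinity` and `ncut_bipartition`, the bandwidth `sigma`, and the power-iteration solver are hypothetical choices, not the project's method. The code computes the standard spectral relaxation of the normalized cut: it finds the eigenvector of $(D - W)\,y = \lambda D y$ with the second-smallest eigenvalue and thresholds it at zero.

```python
import math


def motion_affinity(flows, sigma=1.0):
    """Gaussian affinity between the 2D image-plane motion vectors of features.

    Hypothetical choice: the Gaussian kernel and its bandwidth `sigma` are
    illustrative, not taken from the project.
    """
    n = len(flows)
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            du = flows[i][0] - flows[j][0]
            dv = flows[i][1] - flows[j][1]
            W[i][j] = math.exp(-(du * du + dv * dv) / (2.0 * sigma * sigma))
    return W


def ncut_bipartition(W, iters=500):
    """Two-way split via the spectral relaxation of the normalized cut.

    Finds the second-smallest generalized eigenvector of (D - W) y = lambda D y
    by power iteration on the shifted normalized affinity
    I + D^{-1/2} W D^{-1/2} (eigenvalues in [0, 2]), after projecting out its
    trivial top eigenvector D^{1/2} 1.
    """
    n = len(W)
    d = [sum(row) for row in W]                      # node degrees
    inv_sqrt_d = [1.0 / math.sqrt(x) for x in d]
    M = [[(1.0 if i == j else 0.0) + inv_sqrt_d[i] * W[i][j] * inv_sqrt_d[j]
          for j in range(n)] for i in range(n)]
    top = [math.sqrt(x) for x in d]                  # trivial eigenvector of M
    norm = math.sqrt(sum(t * t for t in top))
    top = [t / norm for t in top]
    v = [float(i + 1) for i in range(n)]             # deterministic, non-constant start
    for _ in range(iters):
        proj = sum(a * b for a, b in zip(v, top))
        v = [a - proj * b for a, b in zip(v, top)]   # stay orthogonal to the trivial eigenvector
        v = [sum(M[i][j] * v[j] for j in range(n)) for i in range(n)]
        length = math.sqrt(sum(a * a for a in v))
        v = [a / length for a in v]
    y = [a * s for a, s in zip(v, inv_sqrt_d)]       # map back: y = D^{-1/2} v
    return [1 if a >= 0.0 else 0 for a in y]          # threshold the relaxed indicator at zero


flows = [(1.0, 0.0), (1.1, 0.0), (0.9, 0.1), (-1.0, 0.5), (-1.1, 0.4), (-0.9, 0.6)]
labels = ncut_bipartition(motion_affinity(flows, sigma=0.5))
```

Which group receives label 1 is arbitrary, because the eigenvector is defined only up to sign. An affinity built purely from 2D flow ignores depth, so features at different depths undergoing the same 3D motion can be split apart. That limitation is one reason the interplay between image-plane and 3D motion segmentation matters.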

Integrating the feature tracking, structure from motion, and segmentation modules into a complete system raises novel issues of both practical and theoretical relevance. Local inconsistencies detected during feature tracking are fed forward as hypotheses for the segmentation. Predictions from the segmentation (high level) are fed back to feature selection (low level), both to validate the measurements and to guide the selection of new features for robustness and speed. Information is also fed to the structure from motion module. The overall system will thus implement a complex control system in which information is represented at different levels of granularity.
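The feedback between segmentation and feature selection can be sketched as a single gating step. This is a hypothetical illustration, not the system's design. It assumes that each feature carries both its tracker measurement and a position predicted by the segmentation's motion model, that consistency is judged by the Euclidean distance between the two against a fixed `gate` (in pixels), and that the selector is asked to replenish the feature set up to a fixed `target` size. `TrackedFeature` and `feedback_step` are invented names.

```python
import math
from dataclasses import dataclass


@dataclass
class TrackedFeature:
    """Hypothetical record for one feature in the current frame."""
    fid: int
    measured: tuple[float, float]   # position reported by the tracker
    predicted: tuple[float, float]  # position predicted from the segmentation's motion model


def feedback_step(features, gate=2.0, target=100):
    """One illustrative pass of the feed-forward / feed-back loop.

    Returns (validated ids, inconsistent ids, number of new features to select).
    Inconsistent features are excluded from the validated set and returned
    separately, so that a segmentation module could treat them as hypotheses
    for a new motion group.
    """
    validated, inconsistent = [], []
    for f in features:
        # Disagreement between low-level measurement and high-level prediction.
        innovation = math.dist(f.measured, f.predicted)
        if innovation <= gate:
            validated.append(f.fid)
        else:
            inconsistent.append(f.fid)
    # Ask the low-level selector to replenish the feature set up to the target size.
    n_new = max(0, target - len(validated))
    return validated, inconsistent, n_new


feats = [
    TrackedFeature(0, (10.0, 10.0), (10.5, 10.0)),
    TrackedFeature(1, (20.0, 5.0), (20.0, 5.0)),
    TrackedFeature(2, (30.0, 30.0), (40.0, 30.0)),
]
validated, inconsistent, n_new = feedback_step(feats, gate=2.0, target=5)
```

In a fuller system the gate would more naturally come from the predicted uncertainty of each feature, for example a Mahalanobis distance, rather than from a fixed pixel threshold.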