VisualBERT: A Simple and Performant Baseline for Vision and Language

Liunian Harold Li, Mark Yatskar, Da Yin, Cho-Jui Hsieh, and Kai-Wei Chang, in Arxiv, 2019.

CodeDownload the full text

Abstract

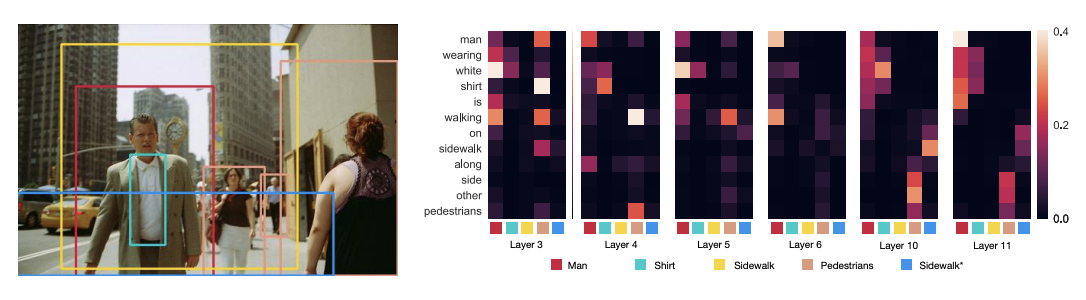

We propose VisualBERT, a simple and flexible framework for modeling a broad range of vision-and-language tasks. VisualBERT consists of a stack of Transformer layers that implicitly align elements of an input text and regions in an associated input image with self-attention. We further propose two visually-grounded language model objectives for pre-training VisualBERT on image caption data. Experiments on four vision-and-language tasks including VQA, VCR, NLVR2, and Flickr30K show that VisualBERT outperforms or rivals with state-of-the-art models while being significantly simpler. Further analysis demonstrates that VisualBERT can ground elements of language to image regions without any explicit supervision and is even sensitive to syntactic relationships, tracking, for example, associations between verbs and image regions corresponding to their arguments.

hot off the press -- VisualBert: A simple and performant baseline for vision and language. Language + image region proposals -> stack of Transformers + pretrain on captions = SOTA or near on 4 V&L problems. https://t.co/uQ4O2Jhe2S @LiLiunian +Cho-Jui Hsieh +Da Yin @kaiwei_chang

— Mark Yatskar (@yatskar) August 12, 2019

VisualBERT is intergrated into Facebook MMF library

Please see more anlaysis about VisualBERT in a recent paper by A. Singh, V. Goswami, V., and D. Parikh (2019)

VisualBERT is used as a baseline in Hateful Memes by Facebook Research

Bib Entry

@inproceedings{li2019visualbert,

author = {Li, Liunian Harold and Yatskar, Mark and Yin, Da and Hsieh, Cho-Jui and Chang, Kai-Wei},

title = {VisualBERT: A Simple and Performant Baseline for Vision and Language},

booktitle = {Arxiv},

year = {2019}

}

Related Publications

- Broaden the Vision: Geo-Diverse Visual Commonsense Reasoning, EMNLP, 2021

- Unsupervised Vision-and-Language Pre-training Without Parallel Images and Captions, NAACL, 2021

- What Does BERT with Vision Look At?, ACL (short), 2020