Context Attentive Document Ranking and Query Suggestion

Wasi Ahmad, Kai-Wei Chang, and Hongning Wang, in SIGIR, 2019.


Abstract

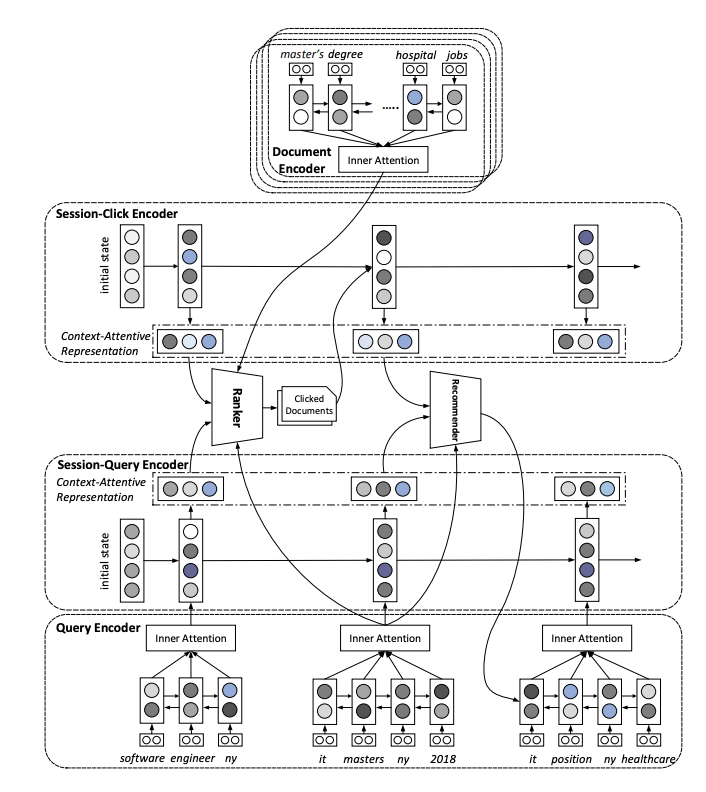

We present a context-aware neural ranking model to exploit users’ on-task search activities and enhance retrieval performance. In particular, a two-level hierarchical recurrent neural network is introduced to learn search context representation of individual queries, search tasks, and corresponding dependency structure by jointly optimizing two companion retrieval tasks: document ranking and query suggestion. To identify variable dependency structure between search context and users’ ongoing search activities, attention at both levels of recurrent states is introduced. Extensive experiment comparisons against a rich set of baseline methods and an in-depth ablation analysis confirm the value of our proposed approach for modeling search context buried in search tasks.
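The two-level idea in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: it assumes plain tanh recurrent cells, dot-product attention, random untrained weights, and toy dimensions, and it omits the joint training on document ranking and query suggestion. All names (`rnn_states`, `attend`, `encode_session`, `params`) are hypothetical.

```python
import math
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def rnn_states(inputs, w_in, w_rec):
    """Run a plain tanh RNN over the inputs and return every hidden state."""
    hidden = [0.0] * len(w_rec)
    states = []
    for x in inputs:
        pre = [a + b for a, b in zip(matvec(w_in, x), matvec(w_rec, hidden))]
        hidden = [math.tanh(p) for p in pre]
        states.append(hidden)
    return states


def attend(states, key):
    """Dot-product attention: softmax-weighted sum of the given states."""
    scores = [sum(s * k for s, k in zip(state, key)) for state in states]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(score - top) for score in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(states[0])
    context = [sum(w * st[i] for w, st in zip(weights, states)) for i in range(dim)]
    return context, weights


def encode_session(session, params):
    """Encode a session given as a list of queries (lists of word vectors)."""
    query_vectors = []
    for words in session:
        word_states = rnn_states(words, params["word_in"], params["word_rec"])
        # Lower level: attend over word states, keyed by the final word state.
        query_vec, _ = attend(word_states, word_states[-1])
        query_vectors.append(query_vec)
    # Upper level: a second RNN runs over the sequence of query vectors.
    session_states = rnn_states(query_vectors, params["sess_in"], params["sess_rec"])
    # Attend over session states, keyed by the most recent query vector.
    return attend(session_states, query_vectors[-1])


def random_matrix(rows, cols, rng):
    """Build an untrained weight matrix (assumption: uniform initialization)."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
EMBED, HIDDEN = 4, 3  # toy sizes chosen for illustration only
params = {
    "word_in": random_matrix(HIDDEN, EMBED, rng),
    "word_rec": random_matrix(HIDDEN, HIDDEN, rng),
    "sess_in": random_matrix(HIDDEN, HIDDEN, rng),
    "sess_rec": random_matrix(HIDDEN, HIDDEN, rng),
}
# A toy session of three queries with 2, 3, and 1 random "word vectors".
session = [
    [[rng.uniform(-1, 1) for _ in range(EMBED)] for _ in range(n_words)]
    for n_words in (2, 3, 1)
]
context, weights = encode_session(session, params)
```

Here `context` plays the role of a search context vector, and `weights` shows how much each query in the session contributes to it. In a trained model, a vector of this kind would feed both a document ranker and a query suggestion decoder.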

Bib Entry

@inproceedings{ahmad2019context,
  author = {Ahmad, Wasi and Chang, Kai-Wei and Wang, Hongning},
  title = {Context Attentive Document Ranking and Query Suggestion},
  booktitle = {SIGIR},
  year = {2019}
}

Related Publications

- A Transformer-based Approach for Source Code Summarization, ACL (short), 2020

- Multifaceted Protein-Protein Interaction Prediction Based on Siamese Residual RCNN, ISMB, 2019

- Multi-Task Learning for Document Ranking and Query Suggestion, ICLR, 2018

- Intent-aware Query Obfuscation for Privacy Protection in Personalized Web Search, SIGIR, 2018

- Counterexamples for Robotic Planning Explained in Structured Language, ICRA, 2018

- Word and sentence embedding tools to measure semantic similarity of Gene Ontology terms by their definitions, Journal of Computational Biology, 2018