Learning to Search for Dependencies

Kai-Wei Chang, He He, Hal Daumé III, and John Langford, in arXiv, 2015.

Abstract

We demonstrate that a dependency parser can be built using a credit assignment compiler, which removes the burden of worrying about low-level machine learning details from the parser implementation. The result is a simple parser that applies robustly to many languages and provides statistical and computational performance similar to the best transition-based parsing approaches to date, while avoiding various downsides including randomization, extra feature requirements, and custom learning algorithms.

Bib Entry

@inproceedings{chang2015learning,

author = {Chang, Kai-Wei and He, He and Daum{\'e} III, Hal and Langford, John},

title = {Learning to Search for Dependencies},

booktitle = {arXiv},

year = {2015}

}

Related Publications

- Robust Text Classifier on Test-Time Budgets, EMNLP (short), 2019

- Efficient Contextual Representation Learning With Continuous Outputs, TACL, 2019

- Distributed Block-diagonal Approximation Methods for Regularized Empirical Risk Minimization, Machine Learning Journal, 2019

- Structured Prediction with Test-time Budget Constraints, AAAI, 2017

- A Credit Assignment Compiler for Joint Prediction, NeurIPS, 2016

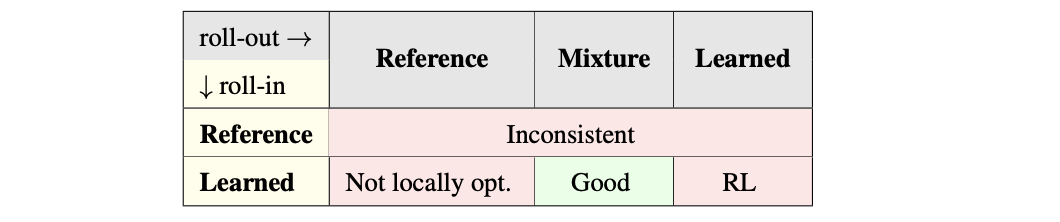

- Learning to Search Better Than Your Teacher, ICML, 2015

- Structural Learning with Amortized Inference, AAAI, 2015