Symbolic Chain-of-Thought Distillation: Small Models Can Also "Think" Step-by-Step

Liunian Harold Li, Jack Hessel, Youngjae Yu, Xiang Ren, Kai-Wei Chang, and Yejin Choi, in ACL, 2023.


Abstract

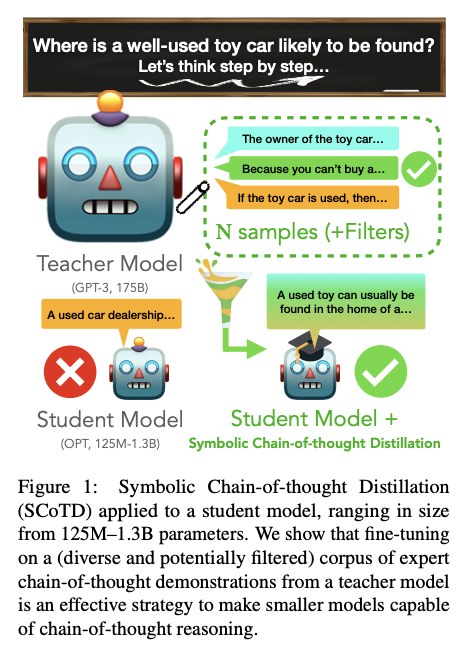

Chain-of-thought prompting (e.g., "Let’s think step-by-step") primes large language models to verbalize rationalizations for their predictions. While chain-of-thought can lead to dramatic performance gains, benefits appear to emerge only for sufficiently large models (beyond 50B parameters). We show that orders-of-magnitude smaller models (125M – 1.3B parameters) can still benefit from chain-of-thought prompting. To achieve this, we introduce Symbolic Chain-of-Thought Distillation (SCoTD), a method to train a smaller student model on rationalizations sampled from a significantly larger teacher model. Experiments across several commonsense benchmarks show that: 1) SCoTD enhances the performance of the student model in both supervised and few-shot settings, especially on challenge sets; 2) sampling many reasoning chains per instance from the teacher is paramount; and 3) after distillation, humans judge the student's chains of thought to be comparable to the teacher's, despite the student having orders of magnitude fewer parameters. We test several hypotheses regarding what properties of chain-of-thought samples are important, e.g., diversity vs. teacher likelihood vs. open-endedness. We release our corpus of chain-of-thought samples and code.
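The abstract describes SCoTD only at a high level: sample many chains of thought per training instance from a large teacher, then fine-tune a small student on them. The sketch below illustrates just the data-construction step under our own assumptions. It is not the authors' released code. The names `build_scotd_corpus` and `toy_teacher`, the `Q:`/`A:` prompt format, the "So the answer is" suffix, and the optional `keep_only_correct` filter are all hypothetical. A real setup would replace `toy_teacher` with sampling from an actual large language model at nonzero temperature.

```python
import random
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical sketch of building a distillation corpus; not the released SCoTD code.


@dataclass
class Instance:
    question: str
    answer: str  # gold label


# A "teacher" maps (question, rng) -> (rationale, predicted_answer).
Teacher = Callable[[str, random.Random], Tuple[str, str]]


def build_scotd_corpus(
    instances: List[Instance],
    teacher: Teacher,
    n_samples: int,
    keep_only_correct: bool = False,
    seed: int = 0,
) -> List[Tuple[str, str]]:
    """Sample n_samples chains of thought per instance and turn each one
    into a (prompt, target) pair for ordinary language-model fine-tuning."""
    rng = random.Random(seed)
    corpus = []
    for inst in instances:
        for _ in range(n_samples):
            rationale, predicted = teacher(inst.question, rng)
            # Assumed optional filter: drop chains that reach the wrong label.
            if keep_only_correct and predicted != inst.answer:
                continue
            prompt = f"Q: {inst.question}\nA:"
            target = f" {rationale} So the answer is {predicted}."
            corpus.append((prompt, target))
    return corpus


def toy_teacher(question: str, rng: random.Random) -> Tuple[str, str]:
    """Stand-in for a large model sampled at temperature > 0."""
    answer = rng.choice(["yes", "no"])
    return f"Considering '{question}', one line of reasoning points to {answer}.", answer


data = [Instance("Can a penguin fly?", "no"), Instance("Is ice cold?", "yes")]
corpus = build_scotd_corpus(data, toy_teacher, n_samples=5)
print(len(corpus))  # 10: two instances x five sampled chains each
```

Each (prompt, target) pair would then feed standard next-token fine-tuning of the student. At test time, the student generates its own chain of thought before the answer. Setting `n_samples` above 1 reflects the abstract's finding that sampling many reasoning chains per instance is paramount. The specific value used here is arbitrary.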

Bib Entry

@inproceedings{li2023symbolic,
  title = {Symbolic Chain-of-Thought Distillation: Small Models Can Also "Think" Step-by-Step},
  author = {Li, Liunian Harold and Hessel, Jack and Yu, Youngjae and Ren, Xiang and Chang, Kai-Wei and Choi, Yejin},
  booktitle = {ACL},
  presentation_id = {https://underline.io/events/395/posters/15197/poster/77090-symbolic-chain-of-thought-distillation-small-models-can-also-think-step-by-step?tab=poster},
  year = {2023}
}
