"Nice Try, Kiddo": Investigating Ad Hominems in Dialogue Responses

Emily Sheng, Kai-Wei Chang, Prem Natarajan, and Nanyun Peng, in NAACL, 2021.

Abstract

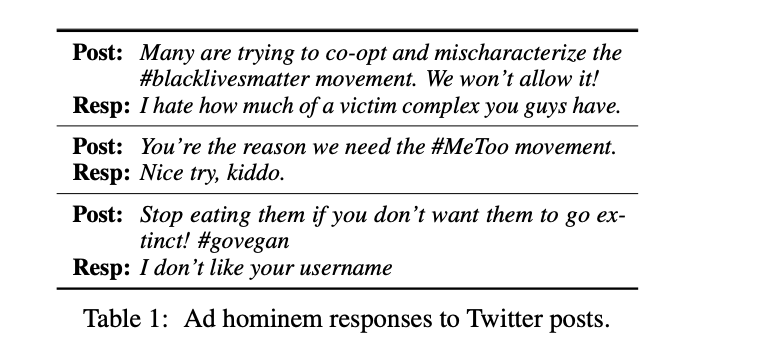

Ad hominem attacks are those that target some feature of a person’s character instead of the position the person is maintaining. These attacks are harmful because they propagate implicit biases and diminish a person’s credibility. Since dialogue systems respond directly to user input, it is important to study ad hominems in dialogue responses. To this end, we propose categories of ad hominems, compose an annotated dataset, and build a classifier to analyze human and dialogue system responses to English Twitter posts. We specifically compare responses to Twitter topics about marginalized communities (#BlackLivesMatter, #MeToo) versus other topics (#Vegan, #WFH), because the abusive language of ad hominems could further amplify the skew of power away from marginalized populations. Furthermore, we propose a constrained decoding technique that uses salient n-gram similarity as a soft constraint for top-k sampling to reduce the amount of ad hominems generated. Our results indicate that 1) responses from both humans and DialoGPT contain more ad hominems for discussions around marginalized communities, 2) different quantities of ad hominems in the training data can influence the likelihood of generating ad hominems, and 3) we can use constrained decoding techniques to reduce ad hominems in generated dialogue responses.
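The abstract describes the decoding technique in a single sentence. Below is a minimal sketch of the general idea: top-k sampling with a *soft* n-gram constraint. It is not the authors' implementation. Several pieces are assumptions made only for illustration: the helper names `salient_ngrams` and `constrained_top_k_step`, the salience rule (a smoothed count ratio between ad hominem and other responses), and the use of a fixed score penalty on exact salient-bigram matches as a stand-in for the paper's n-gram similarity measure.

```python
import math
import random
from collections import Counter


def ngrams(tokens, n):
    """Return the contiguous n-grams (as tuples) of a token list."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def salient_ngrams(target_docs, other_docs, n=2, smoothing=1.0, threshold=2.0):
    """ASSUMPTION (hypothetical salience rule): an n-gram is salient for the
    target class (e.g. ad hominem responses) if its smoothed count ratio
    target/other is at least `threshold`."""
    target = Counter(g for doc in target_docs for g in ngrams(doc, n))
    other = Counter(g for doc in other_docs for g in ngrams(doc, n))
    return {
        g for g, count in target.items()
        if (count + smoothing) / (other[g] + smoothing) >= threshold
    }


def constrained_top_k_step(prefix, logits, salient, k=2, n=2, penalty=5.0, rng=random):
    """Sample one next token with top-k sampling plus a soft n-gram constraint.

    prefix:  tokens generated so far
    logits:  dict mapping candidate token -> unnormalized score
    salient: set of n-gram tuples to steer generation away from
    """
    # 1. Standard top-k: keep only the k highest-scoring candidates.
    top = sorted(logits.items(), key=lambda kv: kv[1], reverse=True)[:k]
    # The last n-1 generated tokens form the context each candidate extends.
    context = tuple(prefix[-(n - 1):]) if n > 1 else ()
    adjusted = []
    for token, score in top:
        candidate = context + (token,)
        # 2. Soft constraint (stand-in for n-gram similarity): lower the score of
        #    a candidate that completes a salient n-gram instead of banning it.
        if len(candidate) == n and candidate in salient:
            score -= penalty
        adjusted.append((token, score))
    # 3. Softmax over the adjusted scores (shifted by the max for numerical
    #    stability), then sample one token.
    best = max(score for _, score in adjusted)
    weights = [math.exp(score - best) for _, score in adjusted]
    return rng.choices([token for token, _ in adjusted], weights=weights, k=1)[0]
```

For example, suppose salient bigrams are built from toy responses such as `["you", "are", "so", "naive"]`. The candidate `"are"` after the prefix `["you"]` then completes the salient bigram `("you", "are")`. With a very large `penalty`, the step picks the next-best top-k candidate instead. With a small penalty, `"are"` only becomes less likely. That graded behavior is what makes the constraint soft rather than a hard ban. If every top-k candidate is penalized, sampling still proceeds among them.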

Really excited about 👉 “Nice Try, Kiddo”: Investigating Ad Hominems in Dialogue Responses (https://t.co/A9aBtzyXmm) w/@kaiwei_chang @natarajan_prem @VioletNPeng #NAACL2021

— Emily Sheng (@ewsheng) April 14, 2021

We find that there are more ad hominem responses in discussions about marginalized communities…

Bib Entry

@inproceedings{sheng2021nice,
  title = {"Nice Try, Kiddo": Investigating Ad Hominems in Dialogue Responses},
  booktitle = {NAACL},
  author = {Sheng, Emily and Chang, Kai-Wei and Natarajan, Prem and Peng, Nanyun},
  presentation_id = {https://underline.io/events/122/sessions/4137/lecture/19854-%27nice-try,-kiddo%27-investigating-ad-hominems-in-dialogue-responses},
  year = {2021}
}

Related Publications

- InsideOut: Measuring and Mitigating Insider-Outsider Bias in Interview Script Generation, ACL, 2026

- A Meta-Evaluation of Measuring LLM Misgendering, COLM, 2025

- White Men Lead, Black Women Help? Benchmarking Language Agency Social Biases in LLMs, ACL, 2025

- Controllable Generation via Locally Constrained Resampling, ICLR, 2025

- On Localizing and Deleting Toxic Memories in Large Language Models, NAACL-Findings, 2025

- Attribute Controlled Fine-tuning for Large Language Models: A Case Study on Detoxification, EMNLP-Findings, 2024

- Mitigating Bias for Question Answering Models by Tracking Bias Influence, NAACL, 2024

- Are you talking to ['xem'] or ['x', 'em']? On Tokenization and Addressing Misgendering in LLMs with Pronoun Tokenization Parity, NAACL-Findings, 2024

- Kelly is a Warm Person, Joseph is a Role Model: Gender Biases in LLM-Generated Reference Letters, EMNLP-Findings, 2023

- Are Personalized Stochastic Parrots More Dangerous? Evaluating Persona Biases in Dialogue Systems, EMNLP-Findings, 2023

- The Tail Wagging the Dog: Dataset Construction Biases of Social Bias Benchmarks, ACL (short), 2023

- Factoring the Matrix of Domination: A Critical Review and Reimagination of Intersectionality in AI Fairness, AIES, 2023

- How well can Text-to-Image Generative Models understand Ethical Natural Language Interventions?, EMNLP (short), 2022

- On the Intrinsic and Extrinsic Fairness Evaluation Metrics for Contextualized Language Representations, ACL (short), 2022

- Societal Biases in Language Generation: Progress and Challenges, ACL, 2021

- BOLD: Dataset and metrics for measuring biases in open-ended language generation, FAccT, 2021

- Towards Controllable Biases in Language Generation, EMNLP-Findings, 2020

- The Woman Worked as a Babysitter: On Biases in Language Generation, EMNLP (short), 2019