Gender Bias in Coreference Resolution: Evaluation and Debiasing Methods

Jieyu Zhao, Tianlu Wang, Mark Yatskar, Vicente Ordonez, and Kai-Wei Chang, in NAACL (short), 2018.

Top-10 cited paper at NAACL 2018


Abstract

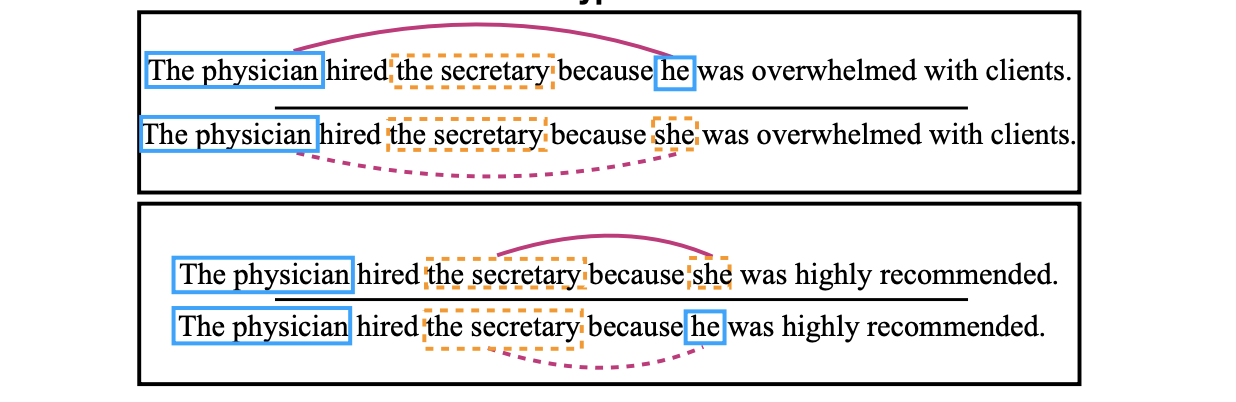

In this paper, we introduce WinoBias, a new benchmark for coreference resolution focused on gender bias. Our corpus contains Winograd-schema style sentences with entities corresponding to people referred to by their occupation (e.g. the nurse, the doctor, the carpenter). We demonstrate that a rule-based, a feature-rich, and a neural coreference system all link gendered pronouns to pro-stereotypical entities with higher accuracy than to anti-stereotypical entities, by an average difference of 21.1 in F1 score. Finally, we demonstrate a data-augmentation approach that, in combination with existing word-embedding debiasing techniques, removes the bias demonstrated by these systems in WinoBias without significantly affecting their performance on existing datasets.
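The abstract does not spell out how the data augmentation works. As a rough illustration only, the sketch below assumes it can be approximated by gender swapping: each training sentence is paired with a copy in which gendered words are exchanged. The swap table, the function names `swap_gender` and `augment`, and the example sentence are hypothetical and are not taken from the paper or its released code.

```python
import re

# Hypothetical, deliberately tiny swap table (an assumption for illustration).
# Only unambiguous pairs are listed: "her" can correspond to either "him" or
# "his", so handling it properly would need part-of-speech information.
SWAP = {
    "he": "she", "she": "he",
    "himself": "herself", "herself": "himself",
}


def swap_gender(sentence: str) -> str:
    """Return a copy of `sentence` with the gendered words in SWAP exchanged."""
    def repl(match: re.Match) -> str:
        word = match.group(0)
        swapped = SWAP.get(word.lower())
        if swapped is None:
            return word  # not a gendered word we know about
        # Preserve sentence-initial capitalization.
        return swapped.capitalize() if word[0].isupper() else swapped

    return re.sub(r"[A-Za-z]+", repl, sentence)


def augment(corpus: list[str]) -> list[str]:
    """Original sentences followed by their gender-swapped counterparts."""
    return list(corpus) + [swap_gender(s) for s in corpus]


if __name__ == "__main__":
    for line in augment(["The nurse said he was tired."]):
        print(line)
```

Training on the union of original and swapped sentences is meant to expose a model to both gender variants of every context equally often. A realistic swap list would be far larger and would also have to deal with names and ambiguous forms, which this sketch leaves out.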

#nlphighlights 66: Jieyu Zhao tells us about gender bias in coreference resolution. Another paper in an important line of work on building diagnostic datasets to see what our models are actually doing. Paper with @bluevincent @yatskar @kaiwei_chang. https://t.co/f2jM23Ardn

— Matt Gardner (@nlpmattg) August 20, 2018

Bib Entry

@inproceedings{zhao2018gender,
  author = {Zhao, Jieyu and Wang, Tianlu and Yatskar, Mark and Ordonez, Vicente and Chang, Kai-Wei},
  title = {Gender Bias in Coreference Resolution: Evaluation and Debiasing Methods},
  booktitle = {NAACL (short)},
  press_url = {https://www.stitcher.com/podcast/matt-gardner/nlp-highlights/e/55861936},
  year = {2018}
}

Related Publications

- Measuring Fairness of Text Classifiers via Prediction Sensitivity, ACL, 2022

- Does Robustness Improve Fairness? Approaching Fairness with Word Substitution Robustness Methods for Text Classification, ACL Findings, 2021

- LOGAN: Local Group Bias Detection by Clustering, EMNLP (short), 2020

- Towards Understanding Gender Bias in Relation Extraction, ACL, 2020

- Mitigating Gender Bias Amplification in Distribution by Posterior Regularization, ACL (short), 2020

- Mitigating Gender Bias in Natural Language Processing: Literature Review, ACL, 2019

- Men Also Like Shopping: Reducing Gender Bias Amplification using Corpus-level Constraints, EMNLP, 2017