Generating Natural Language Adversarial Examples

Moustafa Alzantot, Yash Sharma, Ahmed Elgohary, Bo-Jhang Ho, Mani Srivastava, and Kai-Wei Chang, in EMNLP (short), 2018.

Top-10 cited paper at EMNLP 18

CodeDownload the full text

Abstract

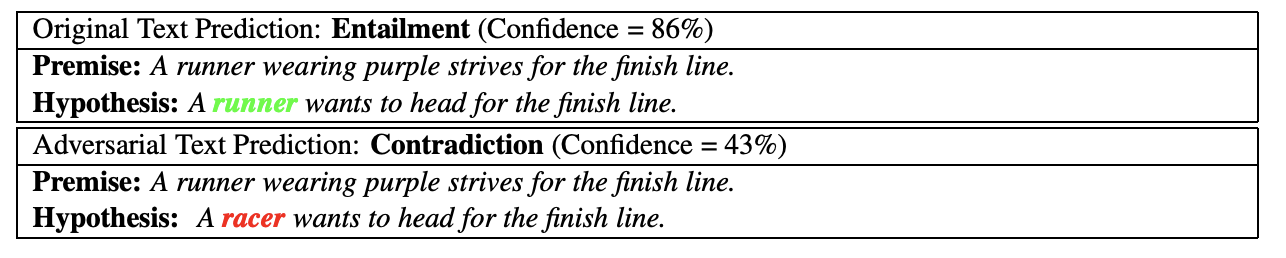

Deep neural networks (DNNs) are vulnerable to adversarial examples, perturbations to correctly classified examples which can cause the network to misclassify. In the image domain, these perturbations can often be made virtually indistinguishable to human perception, causing humans and state-of-the-art models to disagree. However, in the natural language domain, small perturbations are clearly perceptible, and the replacement of a single word can drastically alter the semantics of the document. Given these challenges, we use a population-based optimization algorithm to generate semantically and syntactically similar adversarial examples. We demonstrate via a human study that 94.3% of the generated examples are classified to the original label by human evaluators, and that the examples are perceptibly quite similar. We hope our findings encourage researchers to pursue improving the robustness of DNNs in the natural language domain.

Bib Entry

@inproceedings{alzanto2018generating,

author = {Alzantot, Moustafa and Sharma, Yash and Elgohary, Ahmed and Ho, Bo-Jhang and Srivastava, Mani and Chang, Kai-Wei},

title = {Generating Natural Language Adversarial Examples},

booktitle = {EMNLP (short)},

year = {2018}

}

Related Publications

- VideoCon: Robust video-language alignment via contrast captions, CVPR, 2024

- CleanCLIP: Mitigating Data Poisoning Attacks in Multimodal Contrastive Learning, ICCV, 2023

- Red Teaming Language Model Detectors with Language Models, TACL, 2023

- ADDMU: Detection of Far-Boundary Adversarial Examples with Data and Model Uncertainty Estimation, EMNLP, 2022

- Investigating Ensemble Methods for Model Robustness Improvement of Text Classifiers, EMNLP-Finding (short), 2022

- Unsupervised Syntactically Controlled Paraphrase Generation with Abstract Meaning Representations, EMNLP-Finding (short), 2022

- Improving the Adversarial Robustness of NLP Models by Information Bottleneck, ACL-Finding, 2022

- Searching for an Effiective Defender: Benchmarking Defense against Adversarial Word Substitution, EMNLP, 2021

- On the Transferability of Adversarial Attacks against Neural Text Classifier, EMNLP, 2021

- Defense against Synonym Substitution-based Adversarial Attacks via Dirichlet Neighborhood Ensemble, ACL, 2021

- Double Perturbation: On the Robustness of Robustness and Counterfactual Bias Evaluation, NAACL, 2021

- Provable, Scalable and Automatic Perturbation Analysis on General Computational Graphs, NeurIPS, 2020

- On the Robustness of Language Encoders against Grammatical Errors, ACL, 2020

- Robustness Verification for Transformers, ICLR, 2020

- Learning to Discriminate Perturbations for Blocking Adversarial Attacks in Text Classification, EMNLP, 2019

- Retrofitting Contextualized Word Embeddings with Paraphrases, EMNLP (short), 2019