Co-training Embeddings of Knowledge Graphs and Entity Descriptions for Cross-lingual Entity Alignment

Muhao Chen, Yingtao Tian, Kai-Wei Chang, Steven Skiena, and Carlo Zaniolo, in IJCAI, 2018.


Abstract

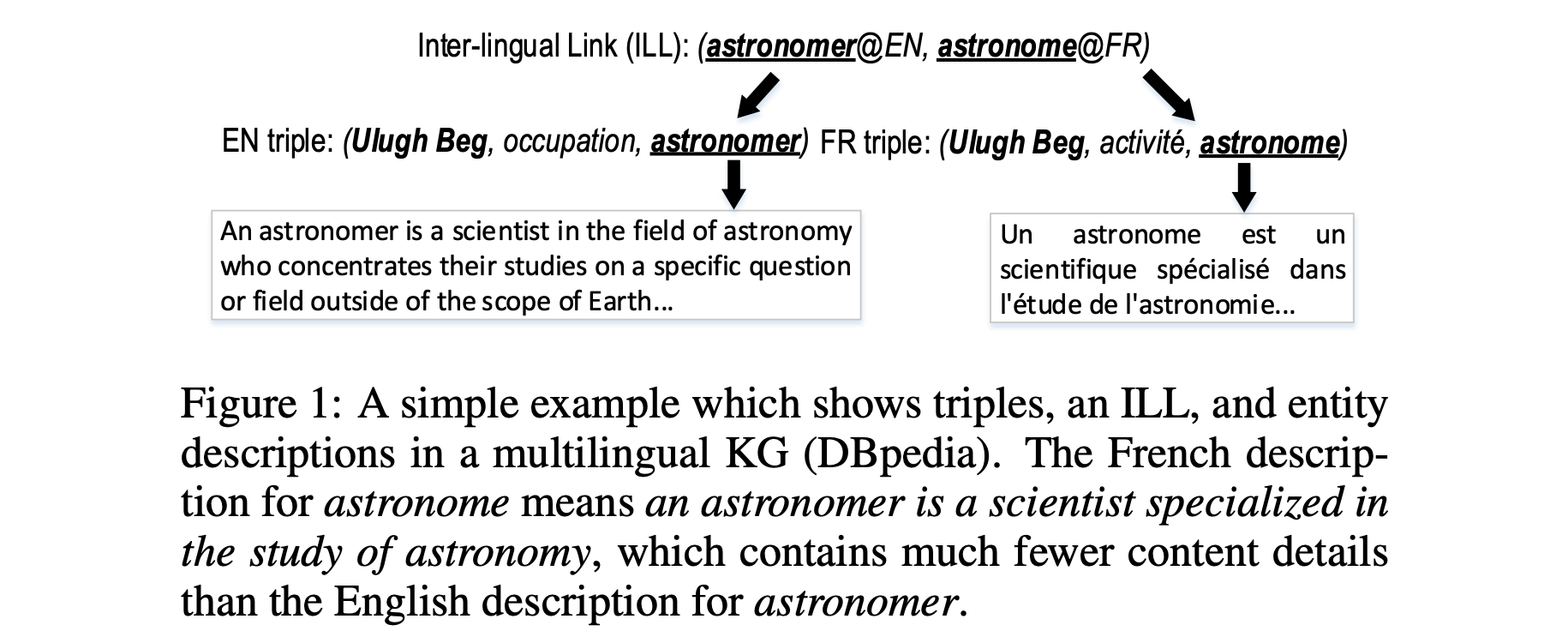

Multilingual knowledge graph (KG) embeddings provide latent semantic representations of entities and structured knowledge, and they support cross-lingual inferences that benefit various knowledge-driven cross-lingual NLP tasks. However, precisely learning such cross-lingual inferences is usually hindered by the low coverage of entity alignment in many KGs. Since many multilingual KGs also provide literal descriptions of entities, in this paper we introduce an embedding-based approach that leverages a weakly aligned multilingual KG for semi-supervised cross-lingual learning using entity descriptions. Our approach performs co-training of two embedding models, i.e., a multilingual KG embedding model and a multilingual literal description embedding model. The models are trained on a large Wikipedia-based trilingual dataset in which most entity alignments are unknown during training. Experimental results show that the performance of the proposed approach on the entity alignment task improves at each iteration of co-training, and eventually reaches a stage at which it significantly surpasses previous approaches. We also show that our approach has promising abilities for zero-shot entity alignment and cross-lingual KG completion.
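The co-training idea in the abstract can be illustrated with a toy sketch. This is not the paper's implementation: the two "models" here are stand-ins that each fit a single mean-offset translation vector between languages, and every name, vector, and threshold below (`fit_offset`, `propose`, `co_train`, `max_dist`, the four toy entities) is an assumption made for illustration. What it shows is only the loop structure: each view is refit on the shared set of known alignments, proposes new alignments it is confident about, and those proposals become training signal for the other view.

```python
import math


def fit_offset(src, tgt, pairs):
    """Fit a translation vector: the mean of (target - source) over known pairs."""
    dim = len(next(iter(src.values())))
    offset = [0.0] * dim
    for s, t in pairs.items():
        for i in range(dim):
            offset[i] += (tgt[t][i] - src[s][i]) / len(pairs)
    return offset


def propose(src, tgt, pairs, offset, max_dist):
    """Propose pairs whose shifted source vector lies within max_dist of a free target."""
    used = set(pairs.values())
    proposals = {}
    for s, vec in src.items():
        if s in pairs:
            continue
        shifted = [v + o for v, o in zip(vec, offset)]
        candidates = [(math.dist(shifted, tv), t)
                      for t, tv in tgt.items() if t not in used]
        if not candidates:
            continue
        dist, best = min(candidates)
        if dist <= max_dist:  # keep only confident proposals
            proposals[s] = best
            used.add(best)
    return proposals


def co_train(views, seed_pairs, max_dist=0.2, max_iters=10):
    """Alternate over views; each one refits on the shared pairs and adds proposals."""
    pairs = dict(seed_pairs)
    for _ in range(max_iters):
        added = 0
        for src, tgt in views:
            offset = fit_offset(src, tgt, pairs)
            new = propose(src, tgt, pairs, offset, max_dist)
            pairs.update(new)
            added += len(new)
        if added == 0:  # no view can propose anything new
            break
    return pairs


# Toy data (hypothetical): lowercase = source-language entities, uppercase = target.
kg_view = (  # stand-in for structure-based embeddings
    {"a": (0.0, 0.0), "b": (1.0, 0.0), "c": (0.0, 1.0), "d": (1.0, 1.0)},
    {"A": (1.0, 1.0), "B": (2.0, 1.5), "C": (1.0, 2.0), "D": (2.3, 2.0)},
)
desc_view = (  # stand-in for description-based embeddings
    {"a": (0.0, 0.0), "b": (2.0, 0.0), "c": (0.0, 2.0), "d": (2.0, 2.0)},
    {"A": (0.5, 0.0), "B": (2.6, 0.1), "C": (0.9, 2.0), "D": (2.6, 2.0)},
)
seed = {"a": "A"}  # the only alignment known before training

print(co_train([kg_view, desc_view], seed))
print(co_train([kg_view], seed))
print(co_train([desc_view], seed))
```

In this toy setup neither view recovers every alignment on its own from the single seed pair, while alternating between the two does, which mirrors the abstract's claim that alignment quality improves across co-training iterations. The confidence threshold `max_dist` is the main design choice: a looser value adds pairs faster but risks feeding wrong alignments to the other view.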

Bib Entry

@inproceedings{chen2018multilingual,
  author = {Chen, Muhao and Tian, Yingtao and Chang, Kai-Wei and Skiena, Steven and Zaniolo, Carlo},
  title = {Co-training Embeddings of Knowledge Graphs and Entity Descriptions for Cross-lingual Entity Alignment},
  booktitle = {IJCAI},
  year = {2018}
}

Related Publications

- Control Large Language Models via Divide and Conquer, EMNLP, 2024

- Re-ReST: Reflection-Reinforced Self-Training for Language Agents, EMNLP, 2024

- Agent Lumos: Unified and Modular Training for Open-Source Language Agents, ACL, 2024

- Characterizing Truthfulness in Large Language Model Generations with Local Intrinsic Dimension, ICML, 2024

- TrustLLM: Trustworthiness in Large Language Models, ICML, 2024

- The steerability of large language models toward data-driven personas, NAACL, 2024

- AI-Assisted Summarization of Radiologic Reports: Evaluating GPT3davinci, BARTcnn, LongT5booksum, LEDbooksum, LEDlegal, and LEDclinical, American Journal of Neuroradiology, 2024

- Understanding and Mitigating Spurious Correlations in Text Classification with Neighborhood Analysis, EACL-Findings, 2024

- Few-Shot Representation Learning for Out-Of-Vocabulary Words, ACL, 2019

- Learning Word Embeddings for Low-resource Languages by PU Learning, NAACL, 2018

- Beyond Bilingual: Multi-sense Word Embeddings using Multilingual Context, ACL RepL4NLP Workshop, 2017