Few-Shot Representation Learning for Out-Of-Vocabulary Words

Ziniu Hu, Ting Chen, Kai-Wei Chang, and Yizhou Sun, in ACL, 2019.

Poster CodeDownload the full text

Abstract

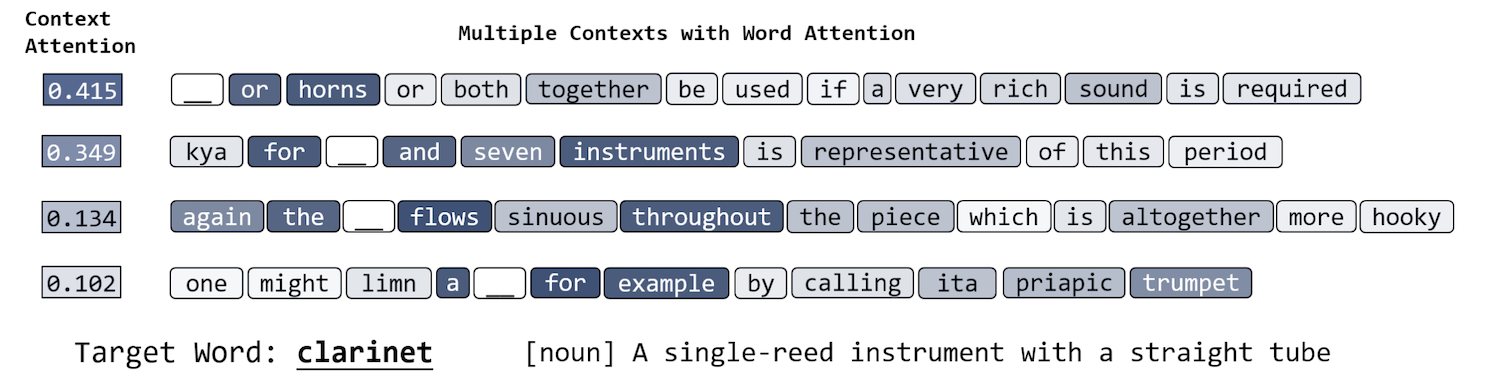

Existing approaches for learning word embeddings often assume there are sufficient occurrences for each word in the corpus, such that the representation of words can be accurately estimated from their contexts. However, in real-world scenarios, out-of-vocabulary (a.k.a. OOV) words that do not appear in training corpus emerge frequently. It is challenging to learn accurate representations of these words with only a few observations. In this paper, we formulate the learning of OOV embeddings as a few-shot regression problem, and address it by training a representation function to predict the oracle embedding vector (defined as embedding trained with abundant observations) based on limited observations. Specifically, we propose a novel hierarchical attention-based architecture to serve as the neural regression function, with which the context information of a word is encoded and aggregated from K observations. Furthermore, our approach can leverage Model-Agnostic Meta-Learning (MAML) for adapting the learned model to the new corpus fast and robustly. Experiments show that the proposed approach significantly outperforms existing methods in constructing accurate embeddings for OOV words, and improves downstream tasks where these embeddings are utilized.

Bib Entry

@inproceedings{hu2019fewshot,

author = {Hu, Ziniu and Chen, Ting and Chang, Kai-Wei and Sun, Yizhou},

title = {Few-Shot Representation Learning for Out-Of-Vocabulary Words},

booktitle = {ACL},

year = {2019}

}

Related Publications

- Control Large Language Models via Divide and Conquer, EMNLP, 2024

- Re-ReST: Reflection-Reinforced Self-Training for Language Agents, EMNLP, 2024

- Agent Lumos: Unified and Modular Training for Open-Source Language Agents, ACL, 2024

- Characterizing Truthfulness in Large Language Model Generations with Local Intrinsic Dimension, ICML, 2024

- TrustLLM: Trustworthiness in Large Language Models, ICML, 2024

- The steerability of large language models toward data-driven personas, NAACL, 2024

- AI-Assisted Summarization of Radiologic Reports: Evaluating GPT3davinci, BARTcnn, LongT5booksum, LEDbooksum, LEDlegal, and LEDclinical, American Journal of Neuroradiology, 2024

- Understanding and Mitigating Spurious Correlations in Text Classification with Neighborhood Analysis, EACL-Findings, 2024

- Learning Word Embeddings for Low-resource Languages by PU Learning, NAACL, 2018

- Co-training Embeddings of Knowledge Graphs and Entity Descriptions for Cross-lingual Entity Alignment, IJCAI, 2018

- Beyond Bilingual: Multi-sense Word Embeddings using Multilingual Context, ACL RepL4NLP Workshop, 2017