DesCo: Learning Object Recognition with Rich Language Descriptions

Liunian Harold Li, Zi-Yi Dou, Nanyun Peng, and Kai-Wei Chang, in NeurIPS, 2023.

Ranks 1st at the #OmniLabel Challenge of CVPR2023


Abstract

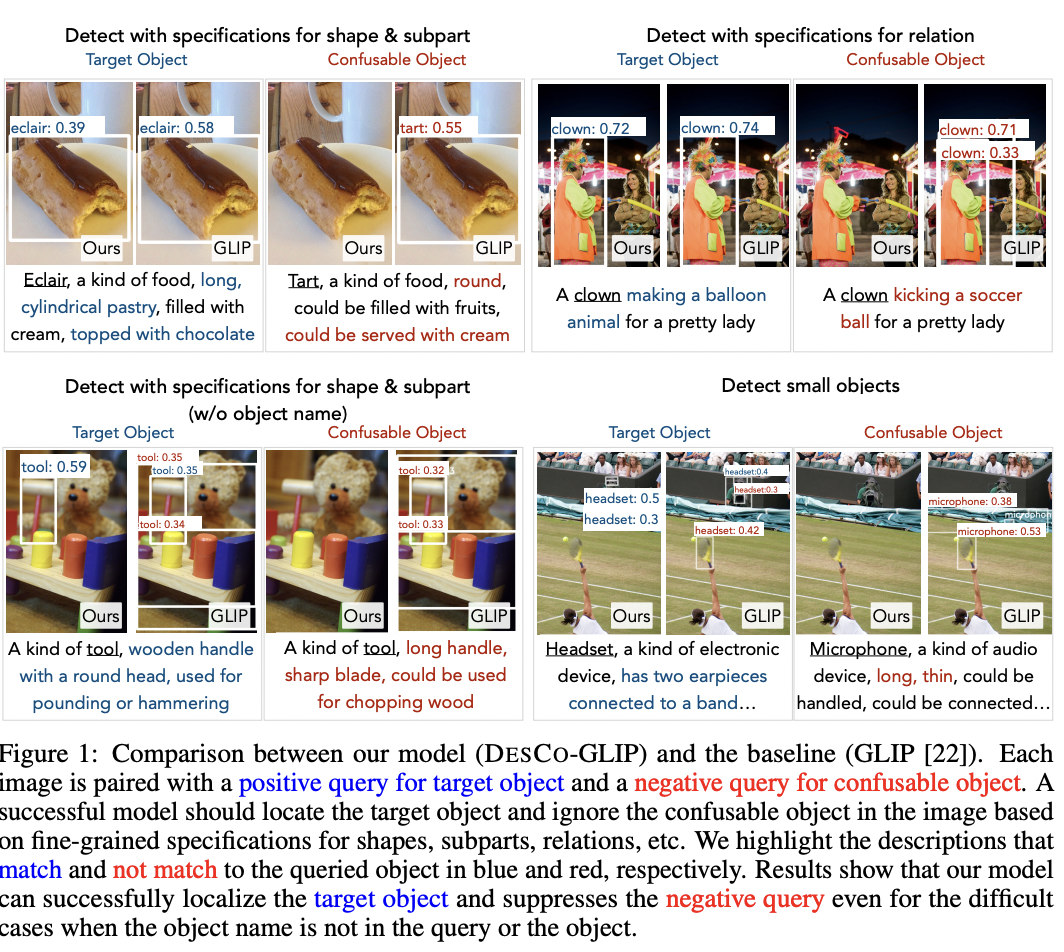

Recent developments in vision-language approaches have prompted a paradigm shift toward learning visual recognition models from language supervision. These approaches align objects with language queries (e.g., "a photo of a cat") and improve the models’ ability to identify novel objects and adapt to new domains. Recently, several studies have attempted to query these models with complex language expressions that specify fine-grained semantic details, such as attributes, shapes, textures, and relations. However, simply using language descriptions as queries does not guarantee that the models interpret them accurately. In fact, our experiments show that GLIP, the state-of-the-art vision-language model for object detection, often disregards contextual information in the language descriptions and instead relies heavily on detecting objects solely by their names. To tackle these challenges, we propose a new description-conditioned (DesCo) paradigm of learning object recognition models with rich language descriptions, consisting of two major innovations: 1) we employ a large language model as a commonsense knowledge engine to generate rich language descriptions of objects based on object names and the raw image-text caption; 2) we design context-sensitive queries to improve the model’s ability to decipher intricate nuances embedded within descriptions and to force the model to focus on context rather than object names alone. On two novel object detection benchmarks, LVIS and OmniLabel, under the zero-shot detection setting, our approach achieves 34.8 APr on LVIS minival (+9.1) and 29.3 AP on OmniLabel (+3.6), surpassing the prior state-of-the-art models, GLIP and FIBER, by a large margin.
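As a toy illustration of the second idea in the abstract, the sketch below assembles a context-sensitive query: one description that matches the target object is mixed with confusable descriptions that share the same object name, so the name alone cannot identify the right one. This is not the authors' implementation. The class `ContextSensitiveQuery`, the function `build_query`, the example descriptions, and the period-separated prompt format are all hypothetical, and in practice the descriptions would come from a large language model rather than being written by hand.

```python
import random
from dataclasses import dataclass, field


@dataclass
class ContextSensitiveQuery:
    """Hypothetical container for a text prompt and its matching description."""
    text: str                     # full prompt that would be given to a detector
    positive: str                 # the description that matches the target object
    candidates: list = field(default_factory=list)  # all descriptions in the prompt


def build_query(positive, negatives, seed=0):
    """Mix one matching description with confusable ones and shuffle them.

    Shuffling removes any positional cue, so only the context words
    (attributes, relations) distinguish the matching description.
    """
    rng = random.Random(seed)
    candidates = [positive, *negatives]
    rng.shuffle(candidates)
    # Assumed prompt format: descriptions joined by periods.
    text = ". ".join(candidates) + "."
    return ContextSensitiveQuery(text=text, positive=positive, candidates=candidates)


# Hand-written stand-ins for LLM-generated descriptions (assumption).
positive = "a mug that is white and sits on a desk"
negatives = [
    "a mug that is red and sits on a shelf",
    "a mug that is white and held in a hand",
]

query = build_query(positive, negatives)
print(query.text)
```

Every candidate contains the word "mug", so a model that matches only object names would score all three descriptions alike; only attention to the attributes and relations separates the positive from the negatives.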

Excited to share our new work DesCo (https://t.co/5rk0ZyhFaZ) -- an instructing object detector that takes complex language descriptions (e.g., attributes & relations).

DesCo improves zero-shot detection (+9.1 APr on LVIS) and ranks 1st at the #OmniLabel Challenge of CVPR2023! pic.twitter.com/OVNCJahD9Z

Liunian Harold Li (@LiLiunian), July 5, 2023

Bib Entry

@inproceedings{li2023desco,
  author = {Li, Liunian Harold and Dou, Zi-Yi and Peng, Nanyun and Chang, Kai-Wei},
  title = {DesCo: Learning Object Recognition with Rich Language Descriptions},
  booktitle = {NeurIPS},
  year = {2023}
}

Related Publications

- VisRet: Visualization Improves Knowledge-Intensive Text-to-Image Retrieval, ACL, 2026

- HoneyBee: Data Recipes for Vision-Language Reasoners, CVPR, 2026

- MotionEdit: Benchmarking and Learning Motion-Centric Image Editing, CVPR, 2026

- LaViDa: A Large Diffusion Language Model for Multimodal Understanding, NeurIPS, 2025

- PARTONOMY: Large Multimodal Models with Part-Level Visual Understanding, NeurIPS, 2025

- STIV: Scalable Text and Image Conditioned Video Generation, ICCV, 2025

- Verbalized Representation Learning for Interpretable Few-Shot Generalization, ICCV, 2025

- Contrastive Visual Data Augmentation, ICML, 2025

- SYNTHIA: Novel Concept Design with Affordance Composition, ACL, 2025

- SlowFast-VGen: Slow-Fast Learning for Action-Driven Long Video Generation, ICLR, 2025

- Towards a holistic framework for multimodal LLM in 3D brain CT radiology report generation, Nature Communications, 2025

- Enhancing Large Vision Language Models with Self-Training on Image Comprehension, NeurIPS, 2024

- CoBIT: A Contrastive Bi-directional Image-Text Generation Model, ICLR, 2024

- What's 'up' with vision-language models? Investigating their struggle to understand spatial relations, EMNLP, 2023

- Text Encoders are Performance Bottlenecks in Contrastive Vision-Language Models, EMNLP, 2023

- MetaVL: Transferring In-Context Learning Ability From Language Models to Vision-Language Models, ACL (short), 2023

- REVEAL: Retrieval-Augmented Visual-Language Pre-Training with Multi-Source Multimodal Knowledge, CVPR, 2023

- Grounded Language-Image Pre-training, CVPR, 2022

- How Much Can CLIP Benefit Vision-and-Language Tasks?, ICLR, 2022