Balanced Datasets Are Not Enough: Estimating and Mitigating Gender Bias in Deep Image Representations

Tianlu Wang, Jieyu Zhao, Mark Yatskar, Kai-Wei Chang, and Vicente Ordonez, in ICCV, 2019.


Abstract

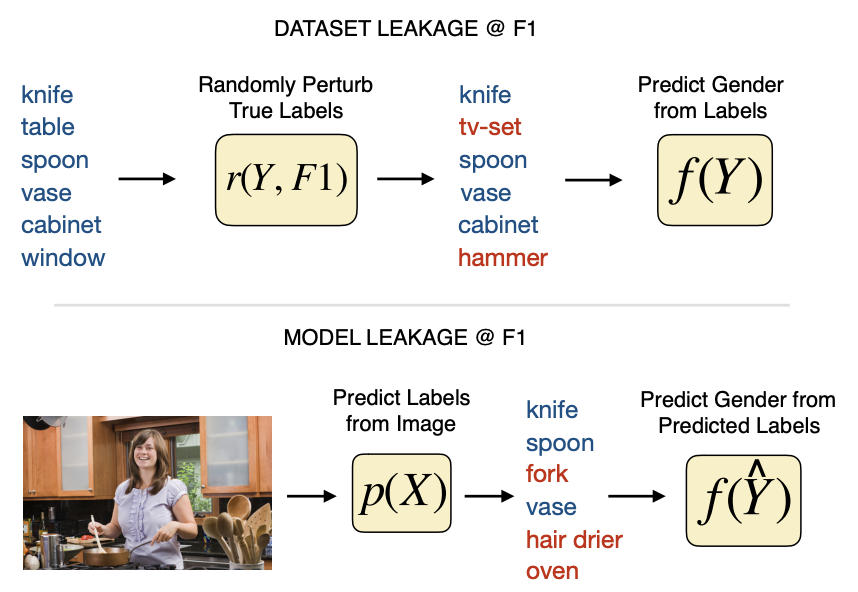

In this work, we present a framework to measure and mitigate intrinsic biases with respect to protected variables, such as gender, in visual recognition tasks. We show that trained models significantly amplify the association of target labels with gender beyond what one would expect from biased datasets. Surprisingly, we show that even when datasets are balanced such that each label co-occurs equally with each gender, learned models amplify the association between labels and gender, as much as if data had not been balanced! To mitigate this, we adopt an adversarial approach to remove unwanted features corresponding to protected variables from intermediate representations in a deep neural network, and provide a detailed analysis of its effectiveness. Experiments on two datasets, the COCO dataset (objects) and the imSitu dataset (actions), show reductions in gender bias amplification while maintaining most of the accuracy of the original models.
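To make the notion of a "balanced" dataset concrete, the sketch below checks whether each label co-occurs equally with each gender in a toy annotation list. It is only an illustration under stated assumptions: the `(labels, gender)` sample format, the function names `gender_ratio_per_label` and `is_balanced`, and the toy data are all hypothetical, and this simple co-occurrence ratio is not the bias measure proposed in the paper.

```python
from collections import Counter

def gender_ratio_per_label(samples):
    """Return {label: fraction of that label's occurrences annotated "woman"}.

    `samples` is an iterable of (labels, gender) pairs, where `labels` is a
    set of label strings and `gender` is "man" or "woman". This format is an
    assumption made for illustration, not the datasets' actual schema.
    """
    total = Counter()
    woman = Counter()
    for labels, gender in samples:
        for label in labels:
            total[label] += 1
            if gender == "woman":
                woman[label] += 1
    return {label: woman[label] / total[label] for label in total}

def is_balanced(samples, tol=0.0):
    """True if every label co-occurs (within `tol`) equally with each gender."""
    ratios = gender_ratio_per_label(samples)
    return all(abs(r - 0.5) <= tol for r in ratios.values())

# Hypothetical toy annotations: "skateboard" appears only with "man".
toy = [
    ({"kitchen", "cup"}, "woman"),
    ({"kitchen"}, "man"),
    ({"cup"}, "man"),
    ({"skateboard"}, "man"),
    ({"skateboard"}, "man"),
]

ratios = gender_ratio_per_label(toy)
print(ratios)
print(is_balanced(toy))       # the skewed "skateboard" label breaks balance
print(is_balanced(toy[:3]))   # the first three samples are balanced
```

Note that, according to the abstract, passing a check like this is not sufficient: models trained on data balanced in this sense can still amplify the association between labels and gender.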

(1/6) Our new findings on gender bias in Computer Vision: https://t.co/mfywaWnlkc. Some task setups will inherently leak gender just by the way in which things are distributed in the dataset and its annotations (w/ Tianlu Wang, Jieyu Zhao @kaiwei_chang @bluevincent)... pic.twitter.com/l99Z5sCxD6

— Mark Yatskar (@yatskar) December 4, 2018

Bib Entry

@inproceedings{wang2019balanced,
  author = {Wang, Tianlu and Zhao, Jieyu and Yatskar, Mark and Chang, Kai-Wei and Ordonez, Vicente},
  title = {Balanced Datasets Are Not Enough: Estimating and Mitigating Gender Bias in Deep Image Representations},
  booktitle = {ICCV},
  year = {2019}
}

Related Publications

- Mitigating Gender Bias in Distilled Language Models via Counterfactual Role Reversal, ACL Findings, 2022

- Harms of Gender Exclusivity and Challenges in Non-Binary Representation in Language Technologies, EMNLP, 2021

- Gender Bias in Multilingual Embeddings and Cross-Lingual Transfer, ACL, 2020

- Examining Gender Bias in Languages with Grammatical Gender, EMNLP, 2019

- Gender Bias in Contextualized Word Embeddings, NAACL (short), 2019

- Learning Gender-Neutral Word Embeddings, EMNLP (short), 2018

- Man is to Computer Programmer as Woman is to Homemaker? Debiasing Word Embeddings, NeurIPS, 2016