Double Perturbation: On the Robustness of Robustness and Counterfactual Bias Evaluation

Chong Zhang, Jieyu Zhao, Huan Zhang, Kai-Wei Chang, and Cho-Jui Hsieh, in NAACL, 2021.

CodeDownload the full text

Abstract

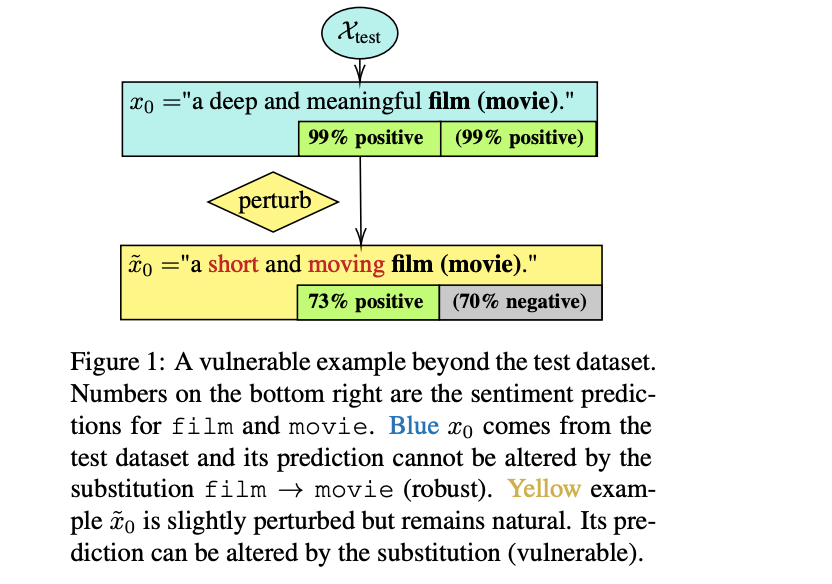

Robustness and counterfactual bias are usually evaluated on a test dataset. However, are these evaluations robust? If the test dataset is perturbed slightly, will the evaluation results keep the same? In this paper, we propose a "double perturbation" framework to uncover model weaknesses beyond the test dataset. The framework first perturbs the test dataset to construct abundant natural sentences similar to the test data, and then diagnoses the prediction change regarding a single-word substitution. We apply this framework to study two perturbation-based approaches that are used to analyze models’ robustness and counterfactual bias in English. (1) For robustness, we focus on synonym substitutions and identify vulnerable examples where prediction can be altered. Our proposed attack attains high success rates (96.0%-99.8%) in finding vulnerable examples on both original and robustly trained CNNs and Transformers. (2) For counterfactual bias, we focus on substituting demographic tokens (e.g., gender, race) and measure the shift of the expected prediction among constructed sentences. Our method is able to reveal the hidden model biases not directly shown in the test dataset.

Prior studies often test model robustness by applying semantic-invariant perturbation on a given test set. In our #NAACL2021 “Double Perturbation: On the Robustness of Robustness and Counterfactual Bias Evaluation”, we propose a new framework for robustness verification. 1/n pic.twitter.com/h4V1dKhYXL

— Jieyu Zhao (@jieyuzhao11) June 5, 2021

Bib Entry

@inproceedings{zhang2021double,

title = { Double Perturbation: On the Robustness of Robustness and Counterfactual Bias Evaluation},

booktitle = {NAACL},

author = {Zhang, Chong and Zhao, Jieyu and Zhang, Huan and Chang, Kai-Wei and Hsieh, Cho-Jui},

year = {2021},

presentation_id = {https://underline.io/events/122/sessions/4229/lecture/19609-double-perturbation-on-the-robustness-of-robustness-and-counterfactual-bias-evaluation}

}

Related Publications

- VideoCon: Robust video-language alignment via contrast captions, CVPR, 2024

- CleanCLIP: Mitigating Data Poisoning Attacks in Multimodal Contrastive Learning, ICCV, 2023

- Red Teaming Language Model Detectors with Language Models, TACL, 2023

- ADDMU: Detection of Far-Boundary Adversarial Examples with Data and Model Uncertainty Estimation, EMNLP, 2022

- Investigating Ensemble Methods for Model Robustness Improvement of Text Classifiers, EMNLP-Finding (short), 2022

- Unsupervised Syntactically Controlled Paraphrase Generation with Abstract Meaning Representations, EMNLP-Finding (short), 2022

- Improving the Adversarial Robustness of NLP Models by Information Bottleneck, ACL-Finding, 2022

- Searching for an Effiective Defender: Benchmarking Defense against Adversarial Word Substitution, EMNLP, 2021

- On the Transferability of Adversarial Attacks against Neural Text Classifier, EMNLP, 2021

- Defense against Synonym Substitution-based Adversarial Attacks via Dirichlet Neighborhood Ensemble, ACL, 2021

- Provable, Scalable and Automatic Perturbation Analysis on General Computational Graphs, NeurIPS, 2020

- On the Robustness of Language Encoders against Grammatical Errors, ACL, 2020

- Robustness Verification for Transformers, ICLR, 2020

- Learning to Discriminate Perturbations for Blocking Adversarial Attacks in Text Classification, EMNLP, 2019

- Retrofitting Contextualized Word Embeddings with Paraphrases, EMNLP (short), 2019

- Generating Natural Language Adversarial Examples, EMNLP (short), 2018