Learning Gender-Neutral Word Embeddings

Jieyu Zhao, Yichao Zhou, Zeyu Li, Wei Wang, and Kai-Wei Chang, in EMNLP (short), 2018.


Abstract

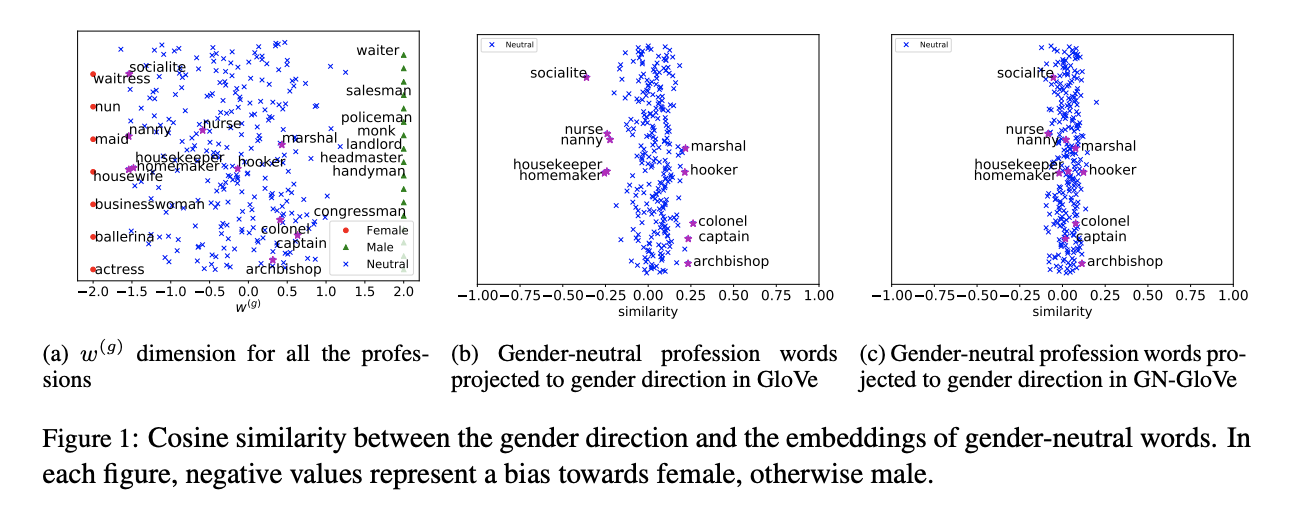

Word embeddings have become a fundamental component in a wide range of Natural Language Processing (NLP) applications. However, these word embeddings trained on human-generated corpora inherit strong gender stereotypes that reflect social constructs. In this paper, we propose a novel word embedding model, De-GloVe, that preserves gender information in certain dimensions of word vectors while compelling other dimensions to be free of gender influence. Quantitative and qualitative experiments demonstrate that De-GloVe successfully isolates gender information without sacrificing the functionality of the embedding model.
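To make the idea of isolating gender in certain dimensions concrete, here is a minimal sketch of how such an embedding might be used downstream. It is not the authors' code: the helper names `split_embedding` and `neutral_cosine`, the choice of the trailing dimensions as the gender dimensions, and the toy vectors are all assumptions made for illustration.

```python
import math

def split_embedding(vec, num_gender_dims=1):
    """Split a word vector into (neutral part, gender part).

    Assumption: the gender information sits in the trailing
    `num_gender_dims` coordinates; the abstract only says
    "certain dimensions".
    """
    if not 0 < num_gender_dims < len(vec):
        raise ValueError("num_gender_dims must be between 1 and len(vec) - 1")
    cut = len(vec) - num_gender_dims
    return list(vec[:cut]), list(vec[cut:])

def neutral_cosine(u, v, num_gender_dims=1):
    """Cosine similarity computed on the neutral dimensions only."""
    u_n, _ = split_embedding(u, num_gender_dims)
    v_n, _ = split_embedding(v, num_gender_dims)
    dot = sum(a * b for a, b in zip(u_n, v_n))
    norm = math.sqrt(sum(a * a for a in u_n)) * math.sqrt(sum(b * b for b in v_n))
    return dot / norm

# Hypothetical toy vectors: identical neutral parts, opposite gender coordinate.
he = [0.2, 0.4, 1.0]
she = [0.2, 0.4, -1.0]

neutral_part, gender_part = split_embedding(he)
similarity = neutral_cosine(he, she)
```

In this toy setup the two words differ only in the reserved coordinate, so a downstream model that reads just the neutral part would treat them as identical, while the gender coordinate remains available when it is needed.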

Bib Entry

@inproceedings{zhao2018learning,

author = {Zhao, Jieyu and Zhou, Yichao and Li, Zeyu and Wang, Wei and Chang, Kai-Wei},

title = {Learning Gender-Neutral Word Embeddings},

booktitle = {EMNLP (short)},

year = {2018}

}

Related Publications

- Mitigating Gender Bias in Distilled Language Models via Counterfactual Role Reversal, ACL Findings, 2022

- Harms of Gender Exclusivity and Challenges in Non-Binary Representation in Language Technologies, EMNLP, 2021

- Gender Bias in Multilingual Embeddings and Cross-Lingual Transfer, ACL, 2020

- Examining Gender Bias in Languages with Grammatical Gender, EMNLP, 2019

- Balanced Datasets Are Not Enough: Estimating and Mitigating Gender Bias in Deep Image Representations, ICCV, 2019

- Gender Bias in Contextualized Word Embeddings, NAACL (short), 2019

- Man is to Computer Programmer as Woman is to Homemaker? Debiasing Word Embeddings, NeurIPS, 2016