Defense against Synonym Substitution-based Adversarial Attacks via Dirichlet Neighborhood Ensemble

Yi Zhou, Xiaoqing Zheng, Cho-Jui Hsieh, Kai-Wei Chang, and Xuanjing Huang, in ACL, 2021.

Abstract

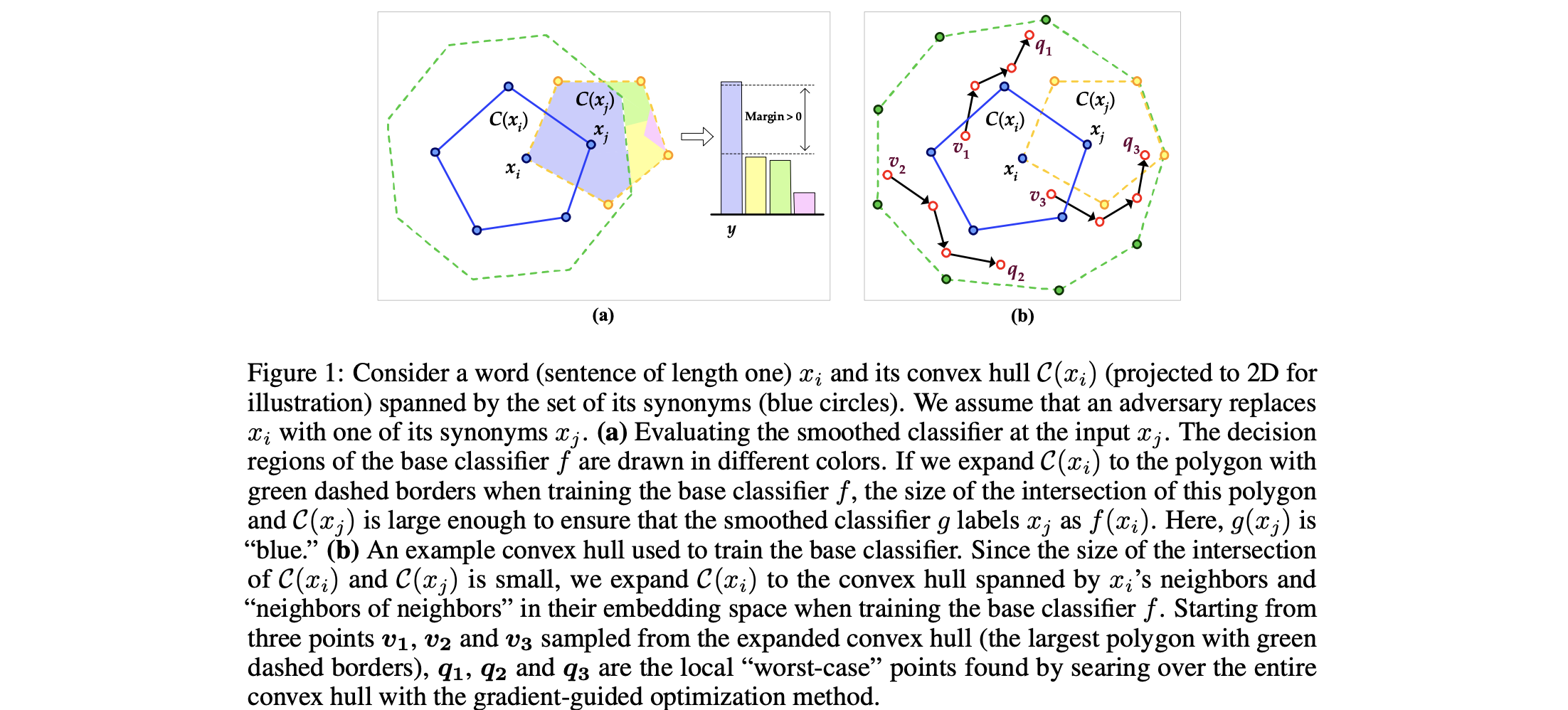

Although deep neural networks have achieved prominent performance on many NLP tasks, they are vulnerable to adversarial examples. We propose Dirichlet Neighborhood Ensemble (DNE), a randomized method for training a robust model to defend against synonym substitution-based attacks. During training, DNE forms virtual sentences by sampling embedding vectors for each word in an input sentence from a convex hull spanned by the word and its synonyms, and it augments the training data with them. In this way, the model becomes robust to adversarial attacks while maintaining performance on the original clean data. DNE is agnostic to network architecture and scales to large models (e.g., BERT) for NLP applications. Through extensive experimentation, we demonstrate that our method consistently outperforms recently proposed defense methods by a significant margin across different network architectures and multiple data sets.
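The sampling step described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `sample_virtual_embeddings`, the `synonyms` dictionary layout, and the single concentration parameter `alpha` are assumptions made for illustration. It only shows how Dirichlet-distributed weights yield a point inside the convex hull of a word's embedding and its synonyms' embeddings.

```python
import numpy as np


def sample_virtual_embeddings(token_ids, embeddings, synonyms, alpha=1.0, rng=None):
    """Sample one embedding per token from the convex hull of the token
    and its synonyms (hypothetical helper, for illustration only).

    token_ids  : sequence of int word ids for one sentence
    embeddings : array of shape (vocab_size, dim)
    synonyms   : dict mapping a word id to a list of synonym word ids (assumed layout)
    alpha      : Dirichlet concentration, shared by all vertices (assumed)
    """
    rng = np.random.default_rng() if rng is None else rng
    out = np.empty((len(token_ids), embeddings.shape[1]), dtype=float)
    for i, tok in enumerate(token_ids):
        # Vertices of the hull: the word itself followed by its synonyms.
        vertex_ids = [tok] + list(synonyms.get(tok, []))
        if len(vertex_ids) == 1:
            # No synonyms: the hull is a single point, so keep the embedding.
            out[i] = embeddings[tok]
            continue
        # Dirichlet weights are non-negative and sum to 1, so the
        # weighted sum of the vertices stays inside their convex hull.
        weights = rng.dirichlet(np.full(len(vertex_ids), alpha))
        out[i] = weights @ embeddings[vertex_ids]
    return out
```

Calling the function once per training step on the same sentence gives a different virtual sentence each time, which is what lets the sampled points act as augmentation alongside the original training data.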

Bib Entry

@inproceedings{zhou2021defense,

title = {Defense against Synonym Substitution-based Adversarial Attacks via Dirichlet Neighborhood Ensemble},

author = {Zhou, Yi and Zheng, Xiaoqing and Hsieh, Cho-Jui and Chang, Kai-Wei and Huang, Xuanjing},

booktitle = {ACL},

year = {2021}

}

Related Publications

- VideoCon: Robust video-language alignment via contrast captions, CVPR, 2024

- CleanCLIP: Mitigating Data Poisoning Attacks in Multimodal Contrastive Learning, ICCV, 2023

- Red Teaming Language Model Detectors with Language Models, TACL, 2023

- ADDMU: Detection of Far-Boundary Adversarial Examples with Data and Model Uncertainty Estimation, EMNLP, 2022

- Investigating Ensemble Methods for Model Robustness Improvement of Text Classifiers, EMNLP-Finding (short), 2022

- Unsupervised Syntactically Controlled Paraphrase Generation with Abstract Meaning Representations, EMNLP-Finding (short), 2022

- Improving the Adversarial Robustness of NLP Models by Information Bottleneck, ACL-Finding, 2022

- Searching for an Effective Defender: Benchmarking Defense against Adversarial Word Substitution, EMNLP, 2021

- On the Transferability of Adversarial Attacks against Neural Text Classifier, EMNLP, 2021

- Double Perturbation: On the Robustness of Robustness and Counterfactual Bias Evaluation, NAACL, 2021

- Provable, Scalable and Automatic Perturbation Analysis on General Computational Graphs, NeurIPS, 2020

- On the Robustness of Language Encoders against Grammatical Errors, ACL, 2020

- Robustness Verification for Transformers, ICLR, 2020

- Learning to Discriminate Perturbations for Blocking Adversarial Attacks in Text Classification, EMNLP, 2019

- Retrofitting Contextualized Word Embeddings with Paraphrases, EMNLP (short), 2019

- Generating Natural Language Adversarial Examples, EMNLP (short), 2018