GPT-GNN: Generative Pre-Training of Graph Neural Networks

Ziniu Hu, Yuxiao Dong, Kuansan Wang, Kai-Wei Chang, and Yizhou Sun, in KDD, 2020.

Top-10 cited paper at KDD 20


Abstract

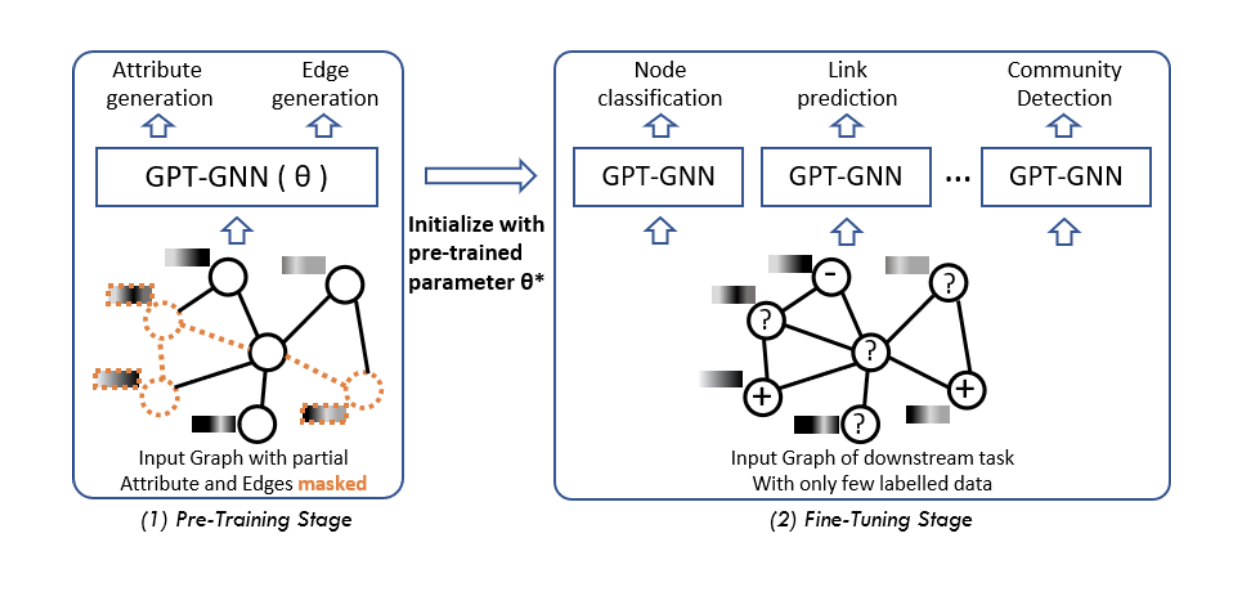

Graph neural networks (GNNs) have been demonstrated to be successful in modeling graph-structured data. However, training GNNs requires abundant task-specific labeled data, which is often arduously expensive to obtain. One effective way to reduce labeling effort is to pre-train an expressive GNN model on unlabeled data with self-supervision and then transfer the learned knowledge to downstream models. In this paper, we present the GPT-GNN framework to initialize GNNs by generative pre-training. GPT-GNN introduces a self-supervised attributed graph generation task to pre-train a GNN, which allows the GNN to capture the intrinsic structural and semantic properties of the graph. We factorize the likelihood of graph generation into two components: 1) attribute generation, and 2) edge generation. By modeling both components, GPT-GNN captures the inherent dependency between node attributes and graph structure during the generative process. Comprehensive experiments on the billion-scale academic graph and Amazon recommendation data demonstrate that GPT-GNN significantly outperforms state-of-the-art base GNN models without pre-training by up to 9.1% across different downstream tasks.
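
The two-component factorization mentioned in the abstract can be sketched with the chain rule of probability. The notation here is an assumption introduced for illustration and does not appear in the abstract: nodes are visited in some fixed order, $X_i$ and $E_i$ denote the attributes and edges of node $i$, and the edges of node $i$ are split into an observed part $E_{i,o}$ and a held-out part $E_{i,\neg o}$.

```latex
\begin{align}
\log p_\theta(X, E)
  &= \sum_{i=1}^{|V|} \log p_\theta\bigl(X_i, E_i \mid X_{<i}, E_{<i}\bigr), \\
p_\theta\bigl(X_i, E_{i,\neg o} \mid E_{i,o}, X_{<i}, E_{<i}\bigr)
  &= \underbrace{p_\theta\bigl(X_i \mid E_{i,o}, X_{<i}, E_{<i}\bigr)}_{\text{attribute generation}}
     \cdot
     \underbrace{p_\theta\bigl(E_{i,\neg o} \mid E_{i,o}, X_i, X_{<i}, E_{<i}\bigr)}_{\text{edge generation}}.
\end{align}
```

Under these assumptions, the edge-generation term is conditioned on the node's own attributes $X_i$, which is one way to read the abstract's claim that the model captures the dependency between node attributes and graph structure.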

Bib Entry

@inproceedings{hu2020gptgnn,
  author = {Hu, Ziniu and Dong, Yuxiao and Wang, Kuansan and Chang, Kai-Wei and Sun, Yizhou},
  title = {GPT-GNN: Generative Pre-Training of Graph Neural Networks},
  booktitle = {KDD},
  slide_url = {https://acbull.github.io/pdf/gpt.pptx},
  year = {2020}
}

Related Publications

- Relation-Guided Pre-Training for Open-Domain Question Answering, EMNLP-Finding, 2021

- An Integer Linear Programming Framework for Mining Constraints from Data, ICML, 2021

- Generating Syntactically Controlled Paraphrases without Using Annotated Parallel Pairs, EACL, 2021

- Clinical Temporal Relation Extraction with Probabilistic Soft Logic Regularization and Global Inference, AAAI, 2021

- PolicyQA: A Reading Comprehension Dataset for Privacy Policies, EMNLP-Finding (short), 2020

- SentiBERT: A Transferable Transformer-Based Architecture for Compositional Sentiment Semantics, ACL, 2020

- Building Language Models for Text with Named Entities, ACL, 2018

- Learning from Explicit and Implicit Supervision Jointly For Algebra Word Problems, EMNLP, 2016