Generating Syntactically Controlled Paraphrases without Using Annotated Parallel Pairs

Kuan-Hao Huang and Kai-Wei Chang, in EACL, 2021.


Abstract

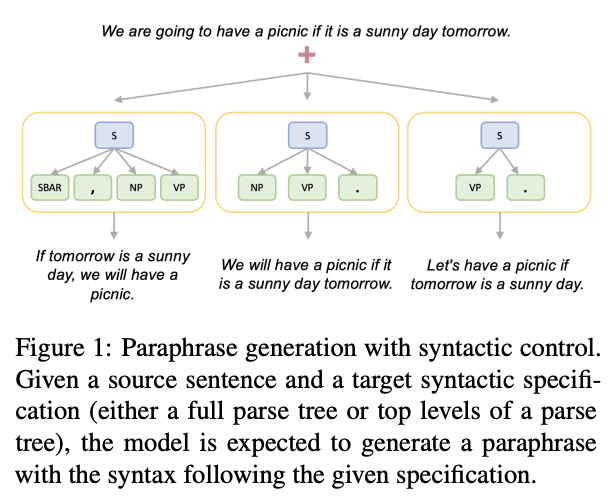

Paraphrase generation plays an essential role in natural language processing (NLP), and it has many downstream applications. However, training supervised paraphrase models requires many annotated paraphrase pairs, which are usually costly to obtain. On the other hand, the paraphrases generated by existing unsupervised approaches are usually syntactically similar to the source sentences and are limited in diversity. In this paper, we demonstrate that it is possible to generate syntactically varied paraphrases without the need for annotated paraphrase pairs. We propose Syntactically controlled Paraphrase Generator (SynPG), an encoder-decoder based model that learns to disentangle the semantics and the syntax of a sentence from a collection of unannotated texts. The disentanglement enables SynPG to control the syntax of output paraphrases by manipulating the embedding in the syntactic space. Extensive experiments using automatic metrics and human evaluation show that SynPG achieves better syntactic control than unsupervised baselines, while the quality of the generated paraphrases is competitive. We also demonstrate that the performance of SynPG is competitive with, or even better than, that of supervised models when the unannotated data is large. Finally, we show that the syntactically controlled paraphrases generated by SynPG can be utilized for data augmentation to improve the robustness of NLP models.
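To make the idea of separating semantics from syntax concrete, here is a minimal sketch of one way a single unannotated sentence could be split into two views once it has been parsed. It is an illustration only, not SynPG's implementation: the abstract does not specify the input representation. The function name `split_parse`, the bracketed parse format, and the choice of a sorted word list as the "semantic" view are all assumptions made for this example.

```python
import re


def split_parse(parse: str):
    """Split a bracketed constituency parse into two views.

    Hypothetical helper for illustration; not taken from the paper.
    Returns (words, skeleton), where `words` is the sorted list of
    terminals (word order discarded) and `skeleton` is the parse with
    all terminals removed.
    """
    # Tokenize into "(", ")", and everything else (labels and words).
    tokens = re.findall(r"\(|\)|[^\s()]+", parse)
    words, skeleton = [], []
    prev = None
    for tok in tokens:
        if tok in ("(", ")"):
            skeleton.append(tok)
        elif prev == "(":
            # A symbol directly after "(" is a constituent label.
            skeleton.append(tok)
        else:
            # Anything else is a terminal, i.e. a word of the sentence.
            words.append(tok)
        prev = tok
    # Sorting drops the original word order, so this view carries
    # lexical content but no syntactic arrangement.
    return sorted(words), "".join(skeleton)


words, skeleton = split_parse("(S (NP (PRP he)) (VP (VBD left)))")
print(words)     # ['he', 'left']
print(skeleton)  # (S(NP(PRP))(VP(VBD)))
```

Under these assumptions, a model could be trained to reconstruct the original sentence from the two views. Swapping in a different skeleton at generation time would then request a different syntactic form for the same words.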

Can we generate syntactically controlled paraphrase without parallel data? 🧐 Check out our (w/ @kuanhao_ ) recent paper at #EACL2021. We also apply this to improve the robustness of models against syntactically adversarial attacks.🛡️ https://t.co/oOGeFy1Y5Z

— Kai-Wei Chang (@kaiwei_chang) February 10, 2021

Bib Entry

@inproceedings{huang2021generating,
  author = {Huang, Kuan-Hao and Chang, Kai-Wei},
  title = {Generating Syntactically Controlled Paraphrases without Using Annotated Parallel Pairs},
  booktitle = {EACL},
  year = {2021}
}
