
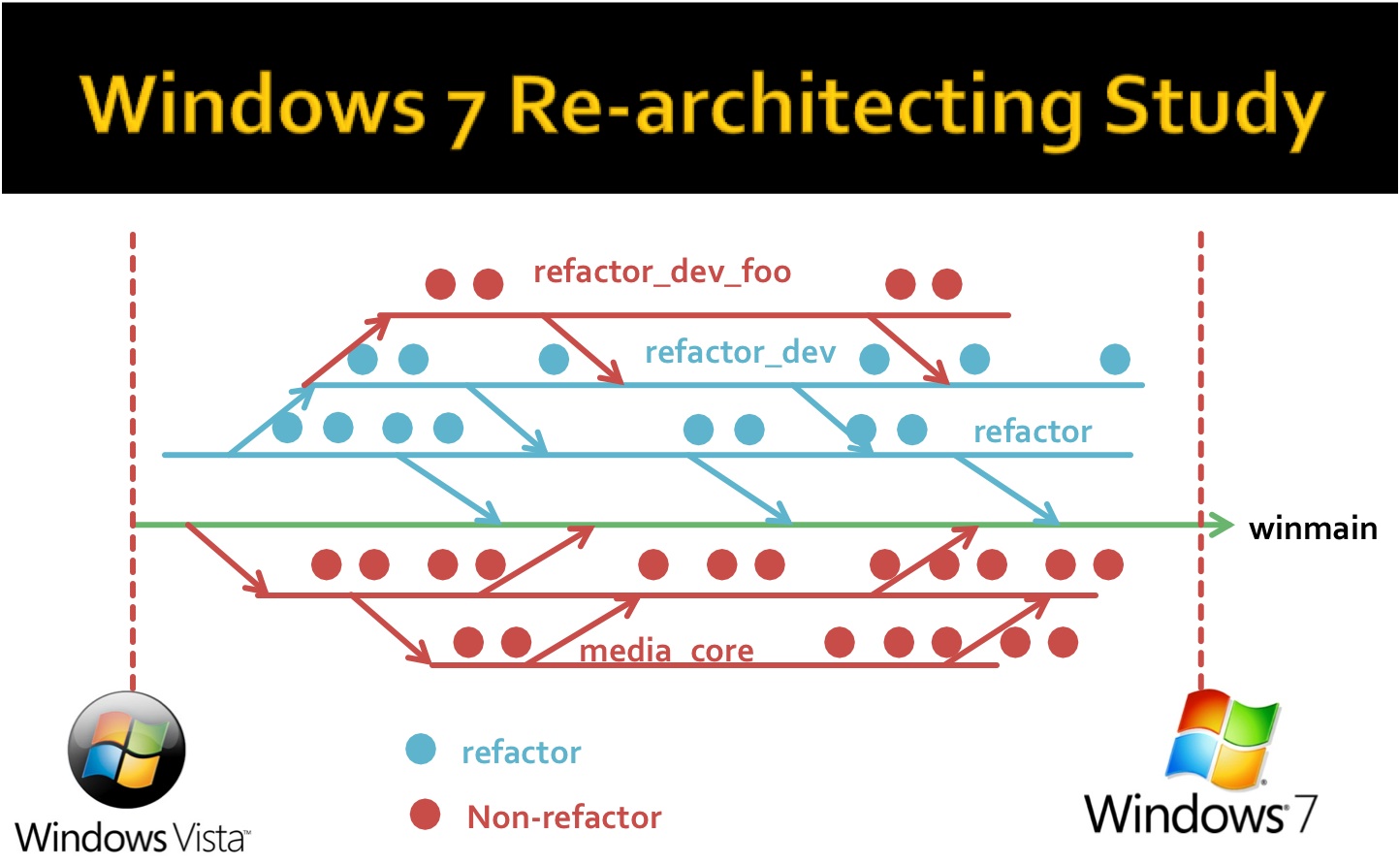

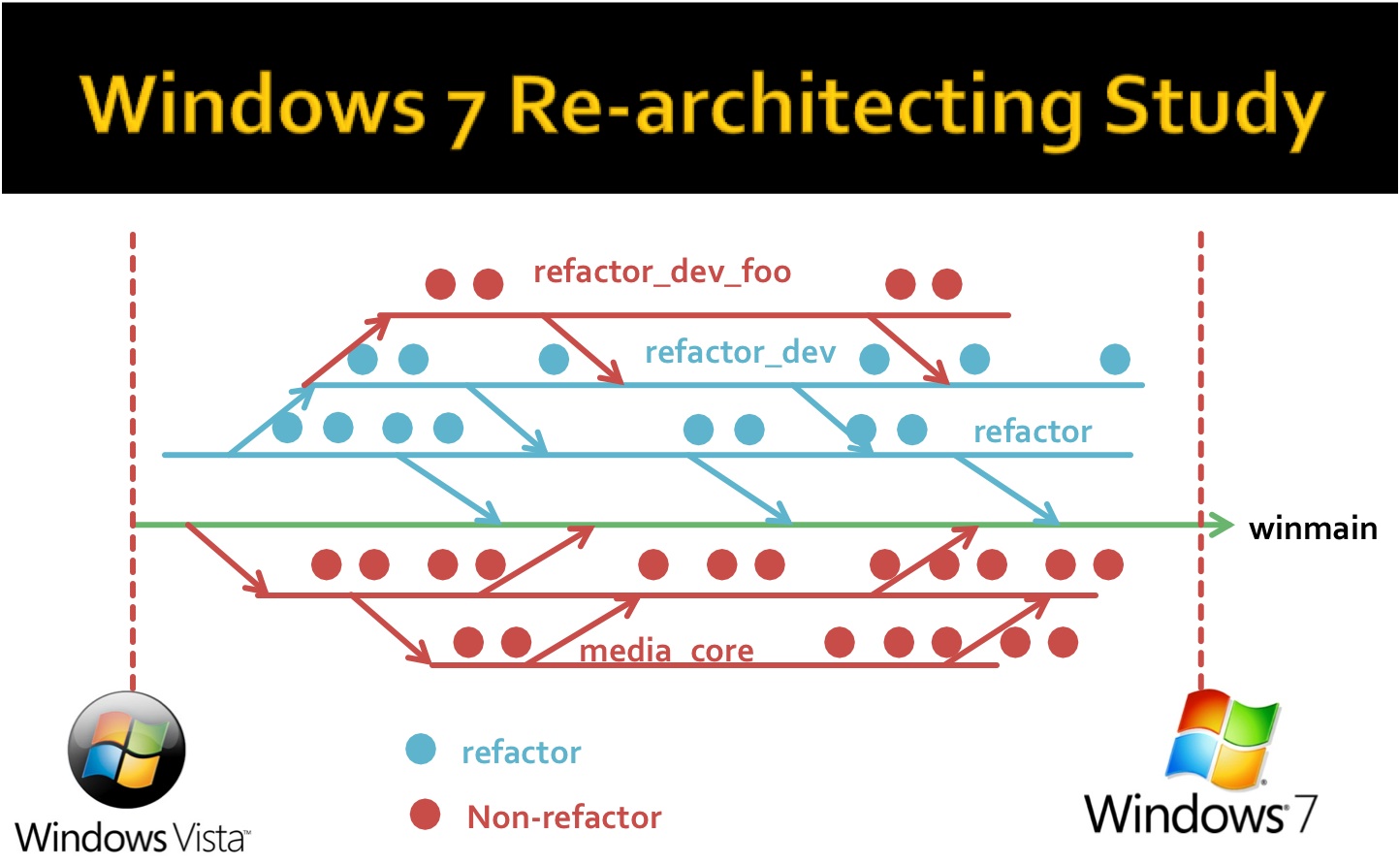

Refactoring is a technique for cleaning up legacy code in preparation for bug fixes or feature additions. To build a scientific foundation for refactoring, we quantified the impact of a multi-year Windows re-architecting effort: we analyzed version history data, surveyed over 300 developers, and interviewed the architects and development leads to assess the impact of refactoring on size, churn, complexity, test coverage, failure, and organization metrics.

- A Field Study of Refactoring at Microsoft, FSE 2012, TSE 2014

- API Refactoring and Bug Fixes, ICSE 2011, Nominated for ACM SIGSOFT Distinguished Paper Award

- API Stability and Adoption, ICSM 2013, Most Influential Paper Award from ICSME 2013

The following techniques find refactoring bugs


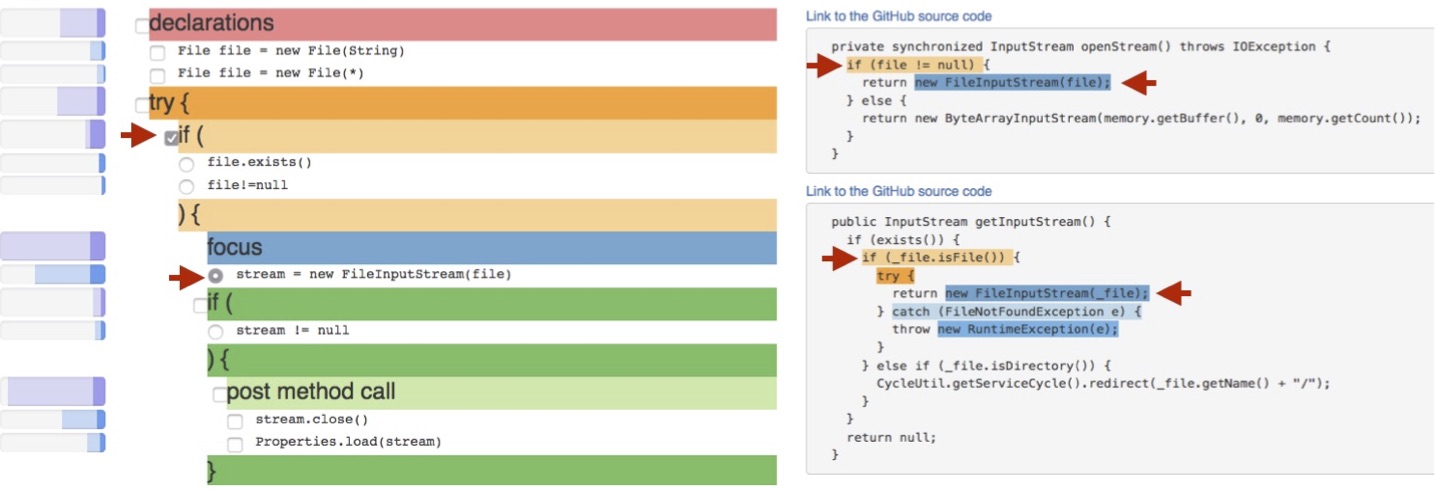

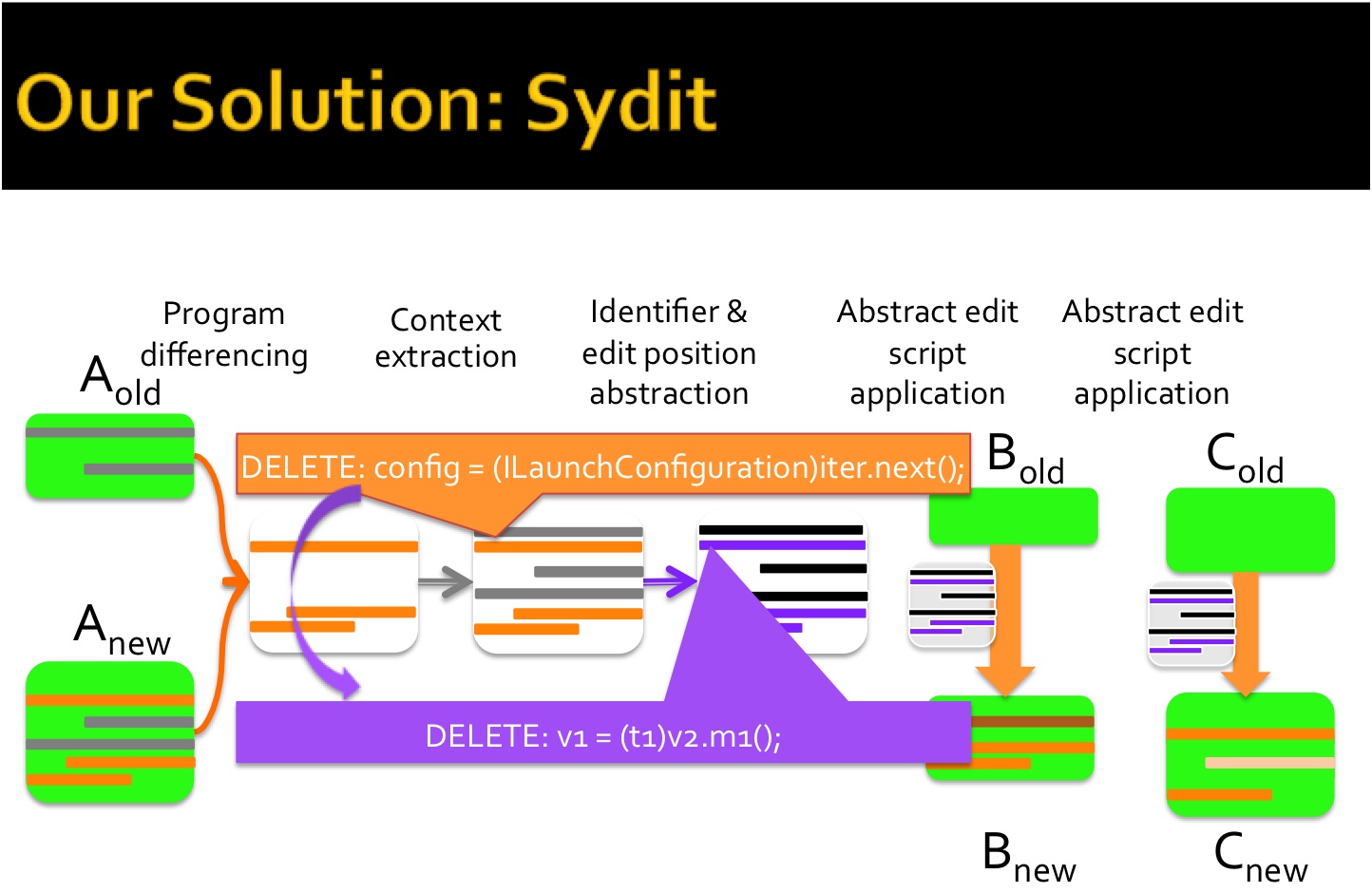

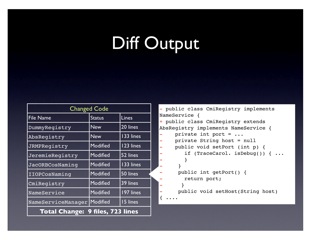

We invented a suite of analysis tools that can help programmers investigate code modifications. We also developed RefFinder, a logic-query approach to refactoring reconstruction. Our insight was that the skeleton of refactoring edits can be expressed as a logical constraint.
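To illustrate what expressing a refactoring skeleton as a logical constraint might look like, here is a minimal sketch in Python. It is not RefFinder's implementation or rule set. The `Method` fact type, the `fact_delta` and `find_move_method` helpers, and the sample classes are all hypothetical names introduced for this example. The sketch treats each program version as a set of facts and encodes a simplified "move method" rule as a conjunction: a method was deleted from one class, a method with the same name and body was added to a different class.

```python
from __future__ import annotations

from typing import NamedTuple


class Method(NamedTuple):
    """A hypothetical fact: class `cls` declares method `name` with `body`."""
    cls: str
    name: str
    body: str


def fact_delta(old: set[Method], new: set[Method]) -> tuple[set[Method], set[Method]]:
    """Return the (deleted, added) method facts between two versions."""
    return old - new, new - old


def find_move_method(old: set[Method], new: set[Method]) -> list[tuple[str, str, str]]:
    """Evaluate a simplified move-method constraint over the fact delta.

    deleted_method(m, c1) AND added_method(m, c2) AND c1 != c2 AND same body
    Returns sorted (method name, source class, target class) triples.
    """
    deleted, added = fact_delta(old, new)
    matches = [
        (d.name, d.cls, a.cls)
        for d in deleted
        for a in added
        if d.name == a.name and d.body == a.body and d.cls != a.cls
    ]
    return sorted(matches)


# Illustrative data only: two tiny versions of a made-up program.
old_version = {
    Method("Order", "total", "return sum(items)"),
    Method("Order", "format_address", "return street + city"),
}
new_version = {
    Method("Order", "total", "return sum(items)"),
    Method("Customer", "format_address", "return street + city"),
}

moves = find_move_method(old_version, new_version)
print(moves)
```

Because the rule is a query over facts rather than a textual diff, it is unaffected by where in the file the method appears. A practical tool would presumably need richer facts (fields, calls, types) and a tolerance for bodies that change slightly during the move, which this sketch leaves out.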
